Category Archives: algorithms

The entertaining platform for technology and design, TED, posted a talk by Area/Code chairman and co-Founder Kevin Slavin, entitled “How algorithms shape our world”:

There are a couple of interesting examples and ideas in there and the analogy between finance algorithms and the larger processing of “culture” is well argued. A fun 15 minutes – there are even explosions in there!

In the beginning, it was all about the algorithm. PageRank and its “no humans involved” mantra have dominated Google since its inception. In recent years however, Google has started to expand the role of “conceptual” knowledge in different areas of its services. The main search bar and its capacity to do all kinds of little tricks is a good example, but I was really quite astounded by how seamless concept integration had become on my last trip to Google Translate:

In August 2010, Edinburgh sociologist Donald MacKenzie (whose book An Engine, not a Camera is an outstanding piece of scholarship) wrote an article in the Financial Times titled Unlocking the Language of Structured Securities where he discusses a software suite for financial analysis called Intex and compares it to a language that allows one to see and interact with the world in certain ways rather than others. MacKenzie describes his first encounter with Intex as a moment of revelation that quickly turned into doubt:

The psychological effect was striking: for the first time, I felt I could understand mortgage-backed securities. Of course, my new-found confidence was spurious. The reliability of Intex’s output depends entirely on the validity of the user’s assumptions about prepayment, default and severity. Nevertheless, it is interesting to speculate whether some of the pre-crisis vogue for mortgage-backed securities resulted from having a system that enabled neophytes such as myself to feel they understood them.

While MacKenzie does not go as far as imputing the recent financial crisis to a piece of software, he points out that Intex is not recursive in its mode of analysis: when evaluating a complex financial asset, for example one of the now (in)famous CDOs that are made up of other assets, themselves combining further values, and so on, Intex does not follow the trail down to the basic entities (the individual mortgages) but calculates risk only from the rating of the asset in question. MacKenzie argues that Goldman-Sachs’ 2006 decision to basically get out of mortgage-backed securities may well be a result of their commitment to go beyond available tools by implementing a (very costly) “bottom-up” approach that builds its evaluation of an asset by calculating up from the basic units of value. The house-of-cards character of these financial instruments could become visible by changing tools and thereby changing perspective or language. Software makes it possible to implement very different practices or languages and to make them pervasive; but how does a company choose one strategy over another? What are the organizational and “cultural” factors that led Goldman-Sachs to change its approach? These may be the truly challenging questions here, although they may never get answered. But they lead to a methodological lesson.

The particular strength of systems like Intex lies in their capacity to black-box evaluation strategies behind a neat interface that allows users to immediately operate on the underlying models, weaving these models into their decisions and practices. Conceptually, we understand the ways in which software shapes action better and better but the empirical complexity of concrete settings is positively daunting even outside of the realm of financial markets. What I take from MacKenzie’s work is that in order to understand the role of software, we have to be very familiar with the specific terrain a system is embedded in, instead of bringing overarching assumptions to the table. Software is a means for building structure and this building is always happening in particular organizational settings that are certainly caught up in larger trends but also full of local challenges, politics, and knowledge. Programs are at the same time a structuring backdrop for practice and part of a strategic repertoire that actors have at their disposal.

The case of financial software indicates that market behavior standardizes around available tools, which leads to the systemic delegation of certain decision processes to software makers. This may result in a particular type of herd behavior and potentially in imbalance and crisis. Somewhat ironically, it is Goldman-Sachs that showed the potential of going against the grain by questioning programmed wisdom. That the company recently paid $550M in fines for abusing its analytical advantage by betting against a CDO it was selling to customers as an investment indicates that ethics and cunning are unfortunately two different pairs of shoes…
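The contrast between reading risk off a rating and calculating it “bottom-up” can be illustrated with a toy sketch. It is neither Intex’s model nor Goldman-Sachs’ method: the asset structure, the field names (`rating`, `components`, `default_prob`, `severity`), the equal weighting, and all numbers are assumptions made up for illustration.

```python
def rating_based_loss(asset, loss_by_rating):
    """Non-recursive view: look only at the rating of the asset itself."""
    return loss_by_rating[asset["rating"]]


def bottom_up_loss(asset):
    """Recursive view: follow the trail down to the individual mortgages.

    A leaf (a mortgage) contributes default probability times severity.
    A composite asset is the equally weighted average of its components,
    which is a strong simplification.
    """
    if "components" not in asset:
        return asset["default_prob"] * asset["severity"]
    parts = asset["components"]
    return sum(bottom_up_loss(part) for part in parts) / len(parts)


# Hypothetical data: a CDO made of two pools, each made of mortgages.
mortgage_a = {"default_prob": 0.5, "severity": 0.5}
mortgage_b = {"default_prob": 0.25, "severity": 0.5}
pool_1 = {"rating": "AAA", "components": [mortgage_a, mortgage_b]}
pool_2 = {"rating": "AAA", "components": [mortgage_a]}
cdo = {"rating": "AAA", "components": [pool_1, pool_2]}

made_up_rating_table = {"AAA": 0.01}
top_down = rating_based_loss(cdo, made_up_rating_table)
bottom_up = bottom_up_loss(cdo)
```

With these invented inputs the two views disagree widely, which is the point: the output of either function depends entirely on assumptions the user feeds in.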

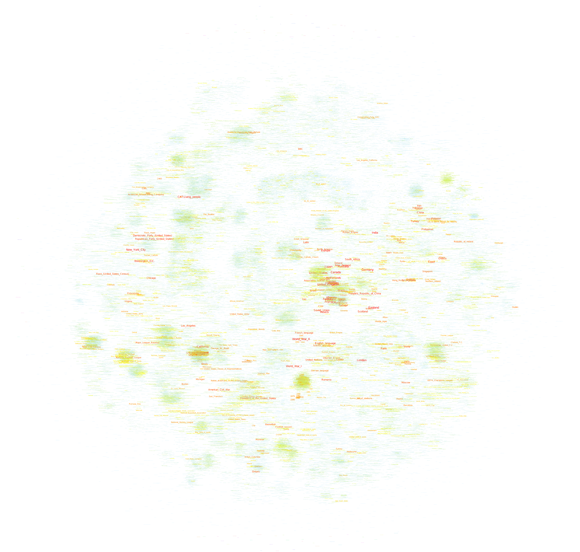

After trying to map the French version of Wikipedia a couple of days ago, I’ve played around with the much bigger English version (the dbpedia file I worked with contains 130M links between Wikipedia pages in a cool 20GB) this weekend and, thanks to a rare lucid moment, I was able to transform that thing into a .gdf that is small enough to be opened in gephi. I settled for the 45K pages with the most links (undirected) and started mapping. All three maps I built use the OpenOrd layout algorithm (1000 iterations). The first uses the modularity measure for “community” detection and colors text accordingly (click on the image for a very large version):

The second uses a grey color scale to express the degree (number of links) of a page:

Finally, the same map, but with a different color scale (light blue => yellow => red):

Every version helps with certain readability issues and you can download all three of the maps as a big .psd so you can easily switch between the different modes.

When comparing these maps with their French counterpart, there are several things that are quite remarkable:

- Most importantly, there is no cluster that I would qualify as “common culture” or “shared knowledge”. There is most certainly a large, dense zone at the center but while the French one draws in all kinds of topics, this version has worldwide country information only. I would prudently argue that the English version of Wikipedia shows a more globalized picture of the world, even if there is a large zone of pages on the left that deals with the United States. It’s a bigger and more heterogeneous world that emerges, but there still is a dominant player.

- Sports is even bigger on the English version and typically American sports (Baseball, NASCAR, etc.) show up on the left in smaller, denser clusters compared to the gigantic football (soccer) area on the center to bottom right.

- The Sciences are smaller but entertainment (TV, popular music, comic books, video games, etc.) is much more present. At least at this level of observation.

- There are some seriously “strange” clusters, such as the dense yellow zone on the far right halfway between top and center that shows a group of Russian painters I have never heard of. Not that I’m an expert but I’ve found little trace of any other painters. This shows the weakness of my selection method by link degree – if there was a way to select nodes by page-views, the results would probably be very different, at least for our Russian painters. But it also shows that despite having become a rather respectable Encyclopedia with a quite classic subject outlook, Wikipedia still is a space for off-the-track topics and for communities that are so passionate about a certain subject that they will groom it and grow it.

I plan on releasing the scripts used to build these maps in the future but I want to try out a couple more things before that, most particularly a version that only takes into account in-links, which should reduce the presence of certain “distributor” pages (“events in 2010”, “people alive”, etc.).
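Until those scripts are available, here is a minimal sketch of the selection step described in this post: rank pages by link count, keep the top ones, and write the remaining subgraph as a .gdf. It is not the script used for the maps. The function names and the `in_links_only` switch are hypothetical, and the writer assumes page names without commas (real Wikipedia titles would need escaping).

```python
from collections import Counter


def top_pages_by_degree(links, keep, in_links_only=False):
    """Return the `keep` pages with the most links.

    links: list of (source, target) page-name pairs.
    in_links_only: count incoming links only, so that "distributor"
    pages that mostly link out no longer rank high.
    """
    degree = Counter()
    for source, target in links:
        degree[target] += 1
        if not in_links_only:
            degree[source] += 1
    return {page for page, _ in degree.most_common(keep)}


def to_gdf(links, pages):
    """Serialize the subgraph restricted to `pages` as GDF text."""
    lines = ["nodedef>name VARCHAR"]
    lines += sorted(pages)
    lines.append("edgedef>node1 VARCHAR,node2 VARCHAR")
    for source, target in links:
        if source in pages and target in pages:
            lines.append(f"{source},{target}")
    return "\n".join(lines)


# Tiny made-up example: "Hub" only links out, "B" is linked to a lot.
links = [("Hub", "A"), ("Hub", "B"), ("Hub", "C"), ("Hub", "D"),
         ("A", "B"), ("C", "B")]
undirected_top = top_pages_by_degree(links, 2)
in_link_top = top_pages_by_degree(links, 1, in_links_only=True)
gdf_text = to_gdf(links, undirected_top)
```

In the toy data, the out-linking “Hub” page makes the cut when links are counted in both directions but drops out once only in-links count, which is the effect expected for pages like “events in 2010”.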

Edit: a map of the English Wikipedia is here.

Wikipedia is a fascinating object for way too many reasons. The way it is produced, the place it has taken in society, its size and evolution, and many other aspects are truly remarkable. Studying Wikipedia has become a discipline in itself and while there may be certain signs of fatigue on the editing front, there is still much to learn and to discover. I have recently started to take an interest in looking at the way knowledge is structured in different contexts and the availability of certain tools and datasets makes Wikipedia a perfect object for scrutiny. If only it weren’t that big. Still, it’s the 21st century and computers are getting really fast, so why not try mapping Wikipedia. All of it.

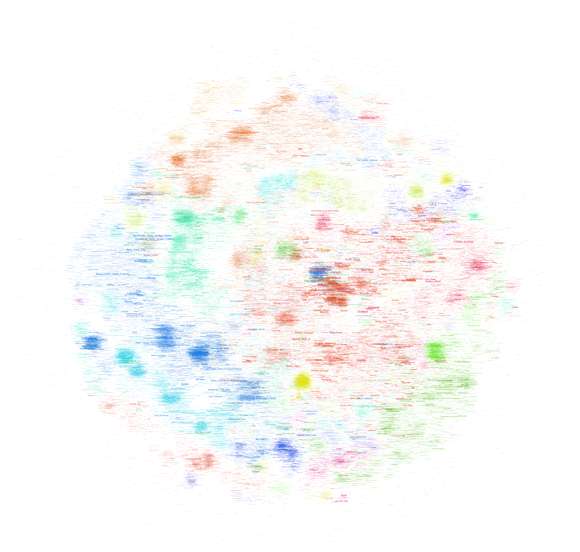

There are different ways to start such a project, but simply taking the link structure is probably the most obvious. This allows for bypassing the internal taxonomy and may lead to a more “organic” expression of underlying knowledge structures. Unfortunately, computers are not that fast – at least not mine – and so I had to make two concessions: I took a non-English variant (I settled for French) and reduced the number of nodes to a (barely) manageable amount. The final graph file (.gdf – do not even think about working with it with less than 4GB of RAM) was built by taking pages that had at least 100 connections with other pages. From an initial 183K pages and 11.5M links I went down to a more manageable 40K and 2M respectively. To make things workable, I chose to visualize the page names only, no nodes, no edges. The result looks like this (click on the image for a very big .png):

Reliable gephi not only did the graph layout (OpenOrd plugin, 1000 iterations) but also dutifully detected “communities” in the network, which actually worked really well. And here is a version in elegant grayscale, this time without community detection:

The graph shows a big dense zone in the middle that is quite unreadable but composed of world history, politics, geography, and other elements that constitute a core set of knowledge elements that are highly interlinked. While France plays an important role here, these elements are actually very globalized and include countries from all over the world. Could we interpret this as a field of “common” or “shared” knowledge? A set of topics that transcend specialization and form the very core of what our culture considers essential?

Just to the right of the very center, there is a rather visible (in orange) cluster on the United States. Around the center you’ll find major historic events and periods (WWII, middle ages, renaissance, etc.). The arts are on the right (mostly music) and France’s most popular art form – Cinema – starts at the top right, in a highly dense orange cluster and goes to the top left, tellingly fusing with theatre. The Sciences form a rather strange blue band that goes from the center top to the top right.

And then there is sports. I was a bit surprised by how much of it there is and how well the clustering and community detection works for identifying individual fields – football, tennis, car racing, and so on. The second surprise was how few “geek” subjects appear on the map. There is a digital technology cluster on the top right but I haven’t found any traces of the legendary Star Trek cluster. In the end, French Wikipedia appears to be a rather classic encyclopedia if you look at it from a subject angle. Could we use such maps to compare subject prominence between cultures?

Obviously, the method for mapping Wikipedia has to be refined to make maps more readable but the results are actually already quite telling. Let’s see whether the same approach can work for the English version – which is a cool 10 times bigger…

The Official Google Blog has recently written about changes to the ranking procedure that were introduced after a NYT article reported on an online retailer that had apparently found out that being nasty to your customers would help to get good search rankings because all of the complaints and bad user reviews would get you links and boost PageRank. While Google denies that this logic would work, they have added a ranking layer to their search results that specifically targets online merchants. The interesting thing about the blog post is that the author details several things that the company could have done but didn’t do while actually revealing very little about what the “algorithmic solution” they implemented actually consists of. From the post:

Instead, in the last few days we developed an algorithmic solution which detects the merchant from the Times article along with hundreds of other merchants that, in our opinion, provide an extremely poor user experience. The algorithm we incorporated into our search rankings represents an initial solution to this issue, and Google users are now getting a better experience as a result.

While I do not believe that transparency is the prime solution to the gatekeeper issues surrounding search, this paragraph really is strikingly vague. Has Google compiled a list of merchants that are systematically downranked? How is this list compiled? What does “in our opinion” mean? Is this “opinion” expressed in the form of an algorithmic procedure (one could imagine using the hReview microformat to collect reviews on merchants)?

We’ll probably not get any answers to these questions but the case really shows how murky the whole ranking thing has become: in an ever-growing online world, search visibility has extremely important financial ramifications (despite the social media hype) and I believe that companies like Google will increasingly rely on human judgment as a complement to algorithmic procedures (which are just another form of human judgment BTW). This will certainly lead to more legal activity around ranking in the future because courts still understand human meddling a lot better than software design…

What is a link? From a methodology standpoint, there is no answer to that question but only the recognition that when using graph theory and associated software tools, we project certain aspects of a dataset as nodes and others as links. In my last post, I “projected” authors from the air-l list as nodes and mail-reply relationships as links. In the example below, I still use authors as nodes but links are derived from a similarity measure of a statistical analysis of each poster’s mails. Here are two gephi graphs:

If you are interested in the technique, it’s a simple similarity measure based on the vector-space model and my amateur computer scientist’s PHP implementation can be found here. The fact that the two posters who changed their “from:” text have both of their accounts close together (can you find them?) is a good indication that the algorithm is not completely botched. The words floating on the links on the right graph are the ones that confer the highest value to the similarity calculation, i.e. words that are used relatively often by both of the linked authors while being generally rare in the whole corpus. Elis Godard and Dana Boyd for example have both written on air-l about Ron Vietti, a pastor who (rightfully?) thinks the Internet is the devil and because very few other people mentioned the holy warrior, the word “vietti” is the highest value “binder” between the two.
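For readers who prefer code to description, here is a minimal sketch of a vector-space similarity of this kind. It is not the PHP implementation mentioned above: the tf-idf weighting, the cosine measure, and all function names are assumptions chosen because they match the description (words used often by both authors but rare in the corpus weigh most).

```python
import math
from collections import Counter


def tfidf_vectors(docs):
    """docs: {author: list of tokens}. Returns {author: {word: weight}}."""
    n = len(docs)
    doc_freq = Counter()
    for tokens in docs.values():
        doc_freq.update(set(tokens))
    vectors = {}
    for author, tokens in docs.items():
        counts = Counter(tokens)
        # Term frequency times inverse document frequency: a word used
        # by every author gets weight 0.
        vectors[author] = {
            word: (count / len(tokens)) * math.log(n / doc_freq[word])
            for word, count in counts.items()
        }
    return vectors


def similarity(vec_a, vec_b):
    """Return (cosine similarity, shared word contributing most to it)."""
    shared = set(vec_a) & set(vec_b)
    products = {word: vec_a[word] * vec_b[word] for word in shared}
    dot = sum(products.values())
    norm_a = math.sqrt(sum(v * v for v in vec_a.values()))
    norm_b = math.sqrt(sum(v * v for v in vec_b.values()))
    if dot == 0 or norm_a == 0 or norm_b == 0:
        return 0.0, None
    binder = max(products, key=products.get)
    return dot / (norm_a * norm_b), binder


# Made-up corpus: four posters, already tokenized and lower-cased.
docs = {
    "ann": ["internet", "vietti", "research"],
    "bob": ["vietti", "internet", "method"],
    "cy": ["internet", "survey", "method"],
    "di": ["internet", "research", "survey"],
}
vectors = tfidf_vectors(docs)
score, binder = similarity(vectors["ann"], vectors["bob"])
```

In this toy corpus “internet” is used by everybody and therefore contributes nothing, while the rarer “vietti” ends up as the binder between the first two posters.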

What is important in networks that are the result of heavily iterative processing is that the algorithms used to create them are full of parameters and changing one of these parameters just a little bit may (!) have larger repercussions. In the example above I actually calculate a similarity measure between each two nodes (60^2 / 2 results) but in order to make the graph somewhat readable I inserted a threshold that boils it down to 637 links. The missing measures are not taken into account in the physics simulation that produces the layout – although they may (!) be significant. I changed the parameter a couple of times to get the graph “right”, i.e. to find a good compromise between link density for simulation and readability. But look at what happens when I raise the threshold so that only the 100 strongest similarity measures survive:

First, a couple of nodes disconnect, two binary stars form around the “from:” changers and the large component becomes a lot looser. Second, Jeremy Hunsinger loses the highest PageRank to Chris Heidelberg. Hunsinger had more links when lower similarity scores were taken into account, but when things get rough in the network world, bonding is better than bridging. What is result and what is artifact?
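The thresholding step itself is tiny, which makes its effect on the picture all the more striking. A minimal sketch, assuming the pairwise scores sit in a dictionary keyed by node pairs (function names and data are hypothetical):

```python
def strongest_links(scores, keep):
    """Keep only the `keep` highest-scoring pairs.

    scores: {(node_a, node_b): similarity}
    """
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return dict(ranked[:keep])


def isolated_nodes(nodes, links):
    """Nodes that no longer take part in any surviving link."""
    linked = {node for pair in links for node in pair}
    return set(nodes) - linked


# Made-up similarity scores between four posters.
nodes = ["a", "b", "c", "d"]
scores = {
    ("a", "b"): 0.9, ("b", "c"): 0.7, ("a", "c"): 0.4,
    ("c", "d"): 0.2, ("a", "d"): 0.1, ("b", "d"): 0.05,
}
loose = strongest_links(scores, 4)
strict = strongest_links(scores, 2)
```

With four links kept, every node stays connected; with two, node “d” drops out of the graph entirely even though its similarity to the others was never zero.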

Most advanced algorithmic techniques are riddled with such parameters and getting a “good” result not only implies fiddling around a lot (how do I clean the text corpus, what algorithms to look for what kind of structures or dynamics, what parameters, what type of representation, here again, what parameters, and so on…) but also having implicit ideas about what kind of result would be “plausible”. The back and forth with the “algorithmic microscope” is always floating against a backdrop of “domain knowledge” and this is one of the reasons why the idea of a science based purely on data analysis is positively absurd. I believe that the key challenge is to stay clear of methodological monoculture and to articulate different approaches together whenever possible.

The Association of Internet Researchers (AOIR) is an important venue if you’re interested in, like the name indicates, Internet research. But it is also a good primary source if one wants to inquire into how and why people study the Internet, which aspects of it, etc. Conveniently for the lazy empirical researcher that I am, the AOIR has an archive of its mailing-list, which has about 22K mails posted by 3K addresses, enough for a little playing around with the impatient person’s tool, the algorithm. I have downloaded the data and I hope I can motivate some of my students to build something interesting with it, but I just had to put it into gephi right away. Some of the tools we’ll hopefully build will concentrate more on text mining but using an address as a node and a mail-reply relationship as a link, one can easily build a social graph.
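As a rough idea of what “address as node, mail-reply as link” means in practice, here is a minimal sketch. The field names (`id`, `from`, `in_reply_to`) are assumptions about how a parsed archive might look, not the actual format of the air-l data, and dropping self-replies is a design choice of the sketch.

```python
from collections import Counter


def reply_graph(mails):
    """Build node and link weights from a list of parsed mails.

    Returns (posts, replies): posts counts mails per address (node size),
    replies counts (replier, replied_to) pairs (link strength).
    """
    author_of = {mail["id"]: mail["from"] for mail in mails}
    posts = Counter(mail["from"] for mail in mails)
    replies = Counter()
    for mail in mails:
        parent = author_of.get(mail.get("in_reply_to"))
        # Skip thread starters, replies to unknown mails, and self-replies.
        if parent is not None and parent != mail["from"]:
            replies[(mail["from"], parent)] += 1
    return posts, replies


# Made-up mini archive.
mails = [
    {"id": 1, "from": "ann@example.org"},
    {"id": 2, "from": "bob@example.org", "in_reply_to": 1},
    {"id": 3, "from": "ann@example.org", "in_reply_to": 2},
    {"id": 4, "from": "bob@example.org", "in_reply_to": 1},
    {"id": 5, "from": "ann@example.org", "in_reply_to": 3},
]
posts, replies = reply_graph(mails)
```

The two counters map directly onto what a graph tool needs: one weighted node list and one weighted, directed edge list.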

I would like to take this example as an occasion to show how different algorithms can produce quite different views on the same data:

So, these are the air-l posters with more than 60 messages posted since 2001. Node size indicates the number of posts, a node’s color (from blue to red) shows its connectivity in the graph (click on the image to see a much larger version). Link strength, i.e. number of replies between two people, is taken into account. You can download the full .gdf here. The only difference between the four graphs is the layout algorithm used (Force Atlas, Force Atlas with attraction distribution, Yifan Hu, and Fruchterman Reingold). You can instantly notice that Yifan Hu pushes nodes with low link count much more strongly to the periphery than the others, while Fruchterman Reingold as always keeps its symmetrical sphere shape, suggesting a more harmonious picture than the rest. Force Atlas’ attraction distribution feature will try to differentiate between hubs and authorities, pushing the former to the periphery while keeping the latter in the center; just compare Barry Wellman’s position over the different graphs.

I’ll probably repeat this experiment with a more segmented graph, but I think this already shows that layout algorithms are not just innocently rendering a graph readable. Every method puts some features of the graph to the forefront and the capacity for critical reading is as important as the willingness for “critical use” that does not gloss over the differences in tools used.

If we want to understand the plethora of very specific roles computers play in today’s world, the question “What is software?” is inevitable. Many different answers have been articulated from different viewpoints and different positions – creator, user, enterprise, etc. – in the networks of practices that surround digital objects. From a scholarly perspective, the question is often tied to another one, “Where does software come from?”, and is connected to a history of mathematical thought and the will/pressure/need to mechanize calculation. There we learn for example that the term “algorithm” is derived from the name of the Persian mathematician al-Khwārizmī and that in mathematical textbooks from the middle ages, the term algorism is used to denote the basic arithmetic techniques – that we now learn in grammar school – which break down e.g. the calculation of a multiplication with large numbers into a series of smaller operations. We learn first about Pascal, Babbage, and Lady Lovelace and then about Hilbert, Gödel, and Turing, about the calculation of projectile trajectories, about cryptography, the halting problem, and the lambda calculus. The heroic history of bold pioneers driven by an uncompromising vision continues into the PC (Engelbart, Kay, the Steves, etc.) and Network (Engelbart again, Cerf, Berners-Lee, etc.) eras. These trajectories of successive invention (mixed with a sometimes exaggerated emphasis on elements from the arsenal of “identity politics”, counter-culture, hacker ethos, etc.) are an integral part of answering our twin question, but they are not enough.

A second strand of inquiry has developed in the slipstream of the monumental work by economic historian Alfred Chandler Jr. (The Visible Hand) who placed the birth of computers and software in the flux of larger developments like industrialization (and particularly the emergence of the large scale enterprise in the late 19th century), bureaucratization, (systems) management, and the general history of modern capitalism. The books by James Beniger (The Control Revolution), JoAnne Yates (Control through Communication and more recently Structuring the Information Age), James W. Cortada (most notably The Digital Hand in three Volumes), and others deepened the economic perspective while Paul N. Edwards’ Closed World or Jon Agar’s The Government Machine look more closely at the entanglements between computers and government (bureaucracy). While these works supply a much needed corrective to the heroic accounts mentioned above, they rarely go beyond the 1960s and do not aim at understanding the specifics of computer technology and software beyond their capacity to increase efficiency and control in information-rich settings (I have not yet read Martin Campbell-Kelly’s From Airline Reservations to Sonic the Hedgehog, the title is a downer but I’m really curious about the book).

Lev Manovich’s Language of New Media is perhaps the most visible work of a third “school”, where computers (equipped with GUIs) are seen as media born from cinema and other analogue technologies of representation (remember Computers as Theatre?). Clustering around an illustrious theoretical neighborhood populated by McLuhan, Metz, Barthes, and many others, these works used to dominate the “XY studies” landscape of the 90s and early 00s before all the excitement went to Web 2.0, participation, amateur culture, and so on. This last group could be seen as a fourth strand but people like Clay Shirky and Yochai Benkler focus so strongly on discontinuity that the question of historical filiation is simply not relevant to their intellectual project. History is there to be baffled by both present and future.

This list could go on, but I do not want to simply inventory work on computers and software but to make the following point: there is a pronounced difference between the questions “What is software?” and “What is today’s software?”. While the first one is relevant to computational theory, software engineering, analytical philosophy, and (curiously) cognitive science, there is no direct line from universal Turing machines to our particular landscape with the millions of specific programs written every year. Digital technology is so ubiquitous that the history of computing is caught up with nearly every aspect of the development of western societies over the last 150 years. Bureaucratization, mass-communication, globalization, artistic avant-garde movements, transformations in the organization of labor, expert movements in public administrations, big science, library classifications, the emergence of statistics, minority struggles, two world wars and too many smaller conflicts to count, accounting procedures, stock markets and the financial crisis, politics from fascism to participatory democracy,… – all of these elements can be examined in connection with computing, shaping the tools and being shaped by them in return. I am starting to believe that for the humanities scholar or the social scientist the question “What is software?” is only slightly less daunting than “What is culture?” or “What is society?”. One thing seems sure: we can no longer pretend to answer the latter two questions without bumping into the first one. The problem for the author, then, becomes to choose the relevant strands, to untangle the mess.

In my view, there is a case to be made for a closer look at the role the library and information sciences played in the development of contemporary software techniques, most obviously on the Internet, but not exclusively. While Bush’s Memex has perhaps been commented on somewhat beyond its actual relevance, the work done by people such as Eugene Garfield (citation analysis), Calvin M. Mooers (information retrieval), Hans-Peter Luhn (KWIC), Edgar Codd (relational database) or Gerard Salton (the vector space model) from the 1950s on has not been worked on much outside of specialist circles – despite the fact that our current ways of working with information (yes, this includes your Facebook profile, everything Google is doing, cloud computing, mobile applications and all the other cool stuff Wired writes about) left behind the logic of the library catalog quite some time ago. This is also where today’s software comes from.

My colleague Theo Röhle and I went to the Computational Turn conference this week. While I would have preferred to hear a bit more on truly digital research methodology (in the fully scientific sense of the word “method”), the day was really quite interesting and the weather unexpectedly gorgeous. Most of the papers are available on the conference site, make sure to have a look. The text I wrote with Theo tried to structure some of the epistemological challenges and problems to take into account when working with digital methods. Here’s a tidbit:

…digital technology is set to change the way scholars work with their material, how they “see” it and interact with it. The question is, now, how well the humanities are prepared for these transformations. If there truly is a paradigm shift on the horizon, we will have to dig deeper into the methodological assumptions that are folded into the new tools. We will need to uncover the concepts and models that have carried over from different disciplines into the programs we employ today…