Category Archives: softwareproject

I am sick this weekend and that’s a justification to stay in bed and play around with the computer a bit. Over these last weeks, I was thinking that it may be interesting to get back to the aging netvizz application and make some direly needed revisions and updates, especially regarding some of the quantitative measures of individual users’ activity. Maintenance work is not fun, however, so I decided to add a new feature instead: the bipartite like network.

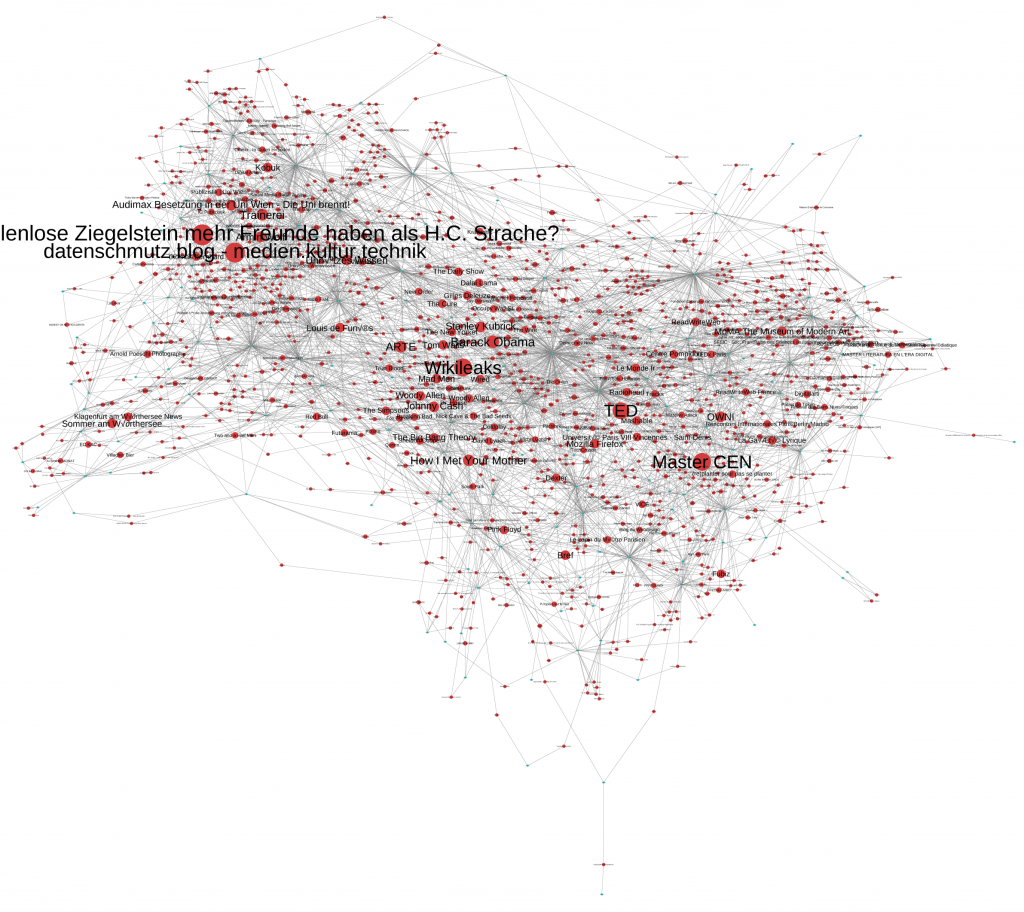

The idea is pretty simple: instead of graphing friend relationships between users, the new output basically just throws users and likes (liked pages that is – external objects are not available through the API) into the same graph. If a user likes something a link is created. That’s also how Facebook’s opengraph architecture works on the inside. The result – done with gephi – is pretty interesting though (click for bigger image):

The small turquoise dots are users and the bigger red ones liked objects. I eliminated users that did not like anything (or have strong privacy settings), as well as all things liked by a single person only. The data field “likesize” in the output file indicates how often an object has been liked and makes it possible to size likes separately from users (the “type” field distinguishes the two). It is not surprising that, at least in my case, the network of friendship connections and the like network are quite similar. People from Austria do not like the same things as my French friends – although there is a cluster of international stuff in the middle: television shows, music, wikileaks, and so on; these things cannot be clearly attributed to a user group.
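The filtering described above can be sketched roughly as follows. This is not netvizz's actual code: it assumes the likes arrive as a hypothetical `{user_id: page_ids}` dictionary, that user and page ids never collide, and it gives users a `likesize` of 0, which is an arbitrary placeholder.

```python
from collections import Counter


def build_like_network(user_likes):
    """Build a bipartite user/page graph from a {user_id: iterable_of_page_ids} dict.

    Assumes user ids and page ids never collide.
    """
    # likesize: number of distinct users who like each page
    likesize = Counter(page for pages in user_likes.values() for page in set(pages))
    shared = {page for page, n in likesize.items() if n >= 2}  # drop single-user likes

    nodes, edges = {}, []
    for user, pages in user_likes.items():
        kept = sorted(set(pages) & shared)
        if not kept:
            # Users with no likes, or only single-user likes, would be isolated.
            continue
        nodes[user] = {"type": "user", "likesize": 0}  # 0 for users: an assumption
        for page in kept:
            nodes[page] = {"type": "like", "likesize": likesize[page]}
            edges.append((user, page))
    return nodes, edges
```

The `type` field lets a visualization tool color the two node kinds differently, and `likesize` can drive the size of page nodes only.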

One can actually use the same output file for something quite different. The next image shows the same graph but with nodes sized by number of connections (degree). This basically shows the biggest “likers” (anonymized for the purpose of this post) in the network while still keeping them grouped by similar like patterns.
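Sizing by degree only requires counting each node's links in the same edge list. A minimal sketch, assuming a hypothetical list of `(user, page)` pairs; in a bipartite like graph a user's degree is simply the number of shared pages they like.

```python
from collections import Counter


def degrees(edges):
    """Count the links of every node in an undirected edge list."""
    deg = Counter()
    for a, b in edges:
        deg[a] += 1
        deg[b] += 1
    return deg


def top_likers(edges, users, k=10):
    """Return the k users with the most links (likes); ties broken by id."""
    deg = degrees(edges)
    return sorted(users, key=lambda u: (-deg[u], u))[:k]
```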

The new feature is already live and can be tried out. If you want to do more than make pretty pictures, I highly recommend checking out the work by my colleagues Carolin Gerlitz and Anne Helmond on what they call the “like economy”.

And now back to bed.

When it comes to analyzing and visualizing data as a graph, we most often select only one unit to represent nodes. When working with social networks, nodes commonly represent user accounts. In a recent post, I used Twitter hashtags instead and established links by looking at which hashtags occurred in the same tweets. But it is very much possible to use different “ontological” units in the same graph. Consider this example from the IPRI project (the full map is a 14MB PNG file):

Here, I decided to mix Twitter user accounts with hashtags. To keep things manageable, I took, from the 25K accounts we follow, only those we identified as journalists who posted at least 300 tweets between February 15 and April 15. For every one of those accounts, I queried our database for the 10 hashtags most often tweeted by the user. I then filtered the graph to show only hashtags used by at least two users. I was finally left with 512 user accounts (the turquoise nodes, size is number of tweets) and 535 hashtags (the red nodes, size is frequency of use). Link strength represents the frequency with which a user tweeted a hashtag. What we get is still a thematic map (Libya, the regional elections, and Japan being the main topics), but this time we also see which users were most strongly attached to these topics.
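The selection steps above can be sketched as follows, assuming the tweets have already been restricted to the journalist accounts and arrive as hypothetical `(user, hashtags)` pairs. `min_tweets`, `top_n`, and `min_users` stand in for the 300-tweet threshold, the top-10 list, and the two-user filter; reading "used by at least two users" as "in the top list of at least two accounts" is an interpretation, not necessarily how the database query worked.

```python
from collections import Counter, defaultdict


def user_hashtag_graph(tweets, min_tweets=300, top_n=10, min_users=2):
    """Return weighted (user, hashtag, count) edges for a user/hashtag graph.

    tweets: iterable of (user, list_of_hashtags) pairs, one per tweet.
    """
    tweet_count = Counter()
    tag_use = defaultdict(Counter)  # user -> how often each hashtag was tweeted
    for user, tags in tweets:
        tweet_count[user] += 1
        tag_use[user].update(tags)

    # Keep sufficiently active accounts and their top_n hashtags.
    top = {user: dict(tag_use[user].most_common(top_n))
           for user, n in tweet_count.items() if n >= min_tweets}

    # Keep hashtags that appear in the top lists of at least min_users accounts.
    users_per_tag = Counter(tag for tags in top.values() for tag in tags)
    keep = {tag for tag, n in users_per_tag.items() if n >= min_users}

    # Edge weight: how often the user tweeted the hashtag.
    return [(user, tag, count)
            for user, tags in top.items()
            for tag, count in tags.items() if tag in keep]
```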

Mapping heterogeneous units opens up many new ways to explore data. The next step I will try to work out is using mentions and retweets to identify not only the level of interest that certain accounts accord to certain topics (which you can see in the map above), but the level of echo that an account produces in relation to a certain topic. We’ll see how that goes.

In completely unrelated news, I read an interesting piece by Rocky Agrawal on why he blocked tech blogger Robert Scoble from his Google+ account. At the very end, he mentions a little experiment that delicious.com founder Joshua Schachter did a couple of days ago: he asked his 14K followers on Twitter and 1.5K followers on Google+ to respond to a post, getting 30 answers from the former and 42 from the latter. Sitting on still largely unexplored bit.ly click data for millions of URLs posted on Twitter, I can only confirm that Twitter impact may be overstated by an order of magnitude…
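In terms of response rates, Schachter's numbers work out roughly as follows (the follower counts are the rounded figures given above, so the result is approximate):

$$
r_{\text{Twitter}} = \frac{30}{14{,}000} \approx 0.21\,\%, \qquad
r_{\text{Google+}} = \frac{42}{1{,}500} = 2.8\,\%, \qquad
\frac{r_{\text{Google+}}}{r_{\text{Twitter}}} \approx 13
$$

A single informal poll proves little, but the gap is of the order of magnitude mentioned above.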

There are many different ways of making sense of large datasets. Using network visualization is one of them. But what is a network? Or rather, which aspects of a dataset do we want to explore as a network? Even social services like Twitter can be graphed in many different ways. Friend/follower connections are an obvious choice, but retweets and mentions can be used as well to establish links between accounts. Hashtag similarity (two users who share a tag are connected; the more tags they share, the closer they are) is yet another method. In fact, when we shift from interactions to co-occurrences, many different things become possible. Instead of mapping user accounts, we can, for example, map hashtags: two tags are connected if they appear in the same tweet, and the number of co-occurrences defines link strength (or “edge weight”). The Mapping Online Publics project has more ideas on this question, including mapping over time.
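Hashtag co-occurrence counting of this kind can be sketched in a few lines, assuming each tweet is given as a hypothetical list of its hashtags. Each tag is counted once per tweet, every unordered pair of tags in a tweet adds 1 to that pair's edge weight, and the optional `top_n` parameter (an illustrative name) restricts the graph to the most frequent tags.

```python
from collections import Counter
from itertools import combinations


def hashtag_cooccurrence(tweets, top_n=None):
    """Return (occurrences, edges) for a hashtag co-occurrence graph.

    tweets: iterable of hashtag lists, one list per tweet.
    edges maps a sorted (tag_a, tag_b) pair to the number of tweets
    in which both tags appear, i.e. the edge weight.
    """
    tweets = [sorted(set(tags)) for tags in tweets]  # count each tag once per tweet
    occurrences = Counter(tag for tags in tweets for tag in tags)
    keep = None
    if top_n is not None:
        keep = {tag for tag, _ in occurrences.most_common(top_n)}

    edges = Counter()
    for tags in tweets:
        if keep is not None:
            tags = [t for t in tags if t in keep]
        for a, b in combinations(tags, 2):  # tags are sorted, so a < b
            edges[(a, b)] += 1
    return occurrences, edges
```

The occurrence counts can size the nodes, while the pair counts become edge weights for the layout.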

In the context of the IPRI research project, we have been following 25K Twitter accounts from the French twittersphere. Here is a map (size: occurrence count / color: degree / layout: gephi with OpenOrd) of the hashtag co-occurrences for the 10,000 hashtags used most often between February 15 2011 and April 15 2011 (the full map is 5MB):

The main topics over this period were the regional elections (“cantonales”) and the Arab spring, particularly the events in Libya. The Japan earthquake is also very prominent. But you’ll also find smaller events leaving their traces, e.g. star designer Galliano’s antisemitic remarks in a Paris restaurant. Large parts of the map show ongoing topics such as cinema, sports, general geekery, and so forth. While not exhaustive, this map is currently helping us to understand which topics are actually “inside” our dataset. This is exploratory data analysis at work: rather than confirming a hypothesis, maps like this can help us get a general understanding of what we’re dealing with and then formulate more precise questions from there.

I just saw that the good people from sociomatic have prepared a nice little slideshow on how to use gephi to analyze social network data extracted from Facebook (using netvizz). This is a great way to start playing around with network analysis and the slides should really help with the first couple of steps…

The Association of Internet Researchers (AOIR) is an important venue if you’re interested in, as the name indicates, Internet research. But it is also a good primary source if one wants to inquire into how and why people study the Internet, which aspects of it, etc. Conveniently for the lazy empirical researcher that I am, the AOIR has an archive of its mailing-list, which has about 22K mails posted by 3K addresses, enough for a little playing around with the impatient person’s tool, the algorithm. I have downloaded the data and I hope I can motivate some of my students to build something interesting with it, but I just had to put it into gephi right away. Some of the tools we’ll hopefully build will concentrate more on text mining, but by using an address as a node and a mail-reply relationship as a link, one can easily build a social graph.
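One way to derive such a reply graph is sketched below with Python's standard `email` package. It assumes the archived mails still carry their `From`, `Message-ID`, and `In-Reply-To` headers, which may not hold for every list archive; the function name and the undirected, weighted edge representation are illustrative choices.

```python
from collections import Counter
from email import message_from_string
from email.utils import parseaddr


def reply_graph(raw_messages):
    """Build a weighted, undirected address graph from raw RFC 822 messages.

    A link joins the sender of a reply and the sender of the message it
    answers, as identified by the In-Reply-To header.
    """
    msgs = [message_from_string(raw) for raw in raw_messages]

    def sender(msg):
        return parseaddr(msg.get("From", ""))[1].lower()

    author_of = {}   # Message-ID -> sender address
    posts = Counter()  # number of posts per address (node size)
    for m in msgs:
        posts[sender(m)] += 1
        if m.get("Message-ID"):
            author_of[m["Message-ID"].strip()] = sender(m)

    edges = Counter()  # (address_a, address_b) -> number of replies between them
    for m in msgs:
        parent = m.get("In-Reply-To")
        if not parent:
            continue
        replied_to = author_of.get(parent.strip())
        if replied_to and replied_to != sender(m):
            edges[tuple(sorted((sender(m), replied_to)))] += 1
    return posts, edges
```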

I would like to take this example as an occasion to show how different algorithms can produce quite different views on the same data:

So, these are the air-l posters with more than 60 messages posted since 2001. Node size indicates the number of posts, and a node’s color (from blue to red) shows its connectivity in the graph. Link strength, i.e. the number of replies between two people, is taken into account. You can download the full .gdf here. The only difference between the four graphs is the layout algorithm used (Force Atlas, Force Atlas with attraction distribution, Yifan Hu, and Fruchterman-Reingold). You can instantly see that Yifan Hu pushes nodes with a low link count much more strongly to the periphery than the others, while Fruchterman-Reingold, as always, keeps its symmetrical sphere shape, suggesting a more harmonious picture than the rest. Force Atlas’ attraction distribution feature tries to differentiate between hubs and authorities, pushing the former to the periphery while keeping the latter in the center; just compare Barry Wellman’s position across the different graphs.
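To make "layout algorithm" a little more concrete, here is a minimal sketch of the force model from Fruchterman and Reingold's original paper: all node pairs repel, linked nodes attract, and a cooling temperature limits how far nodes move per step. It is a simplified illustration, not gephi's implementation; it ignores edge weights, and the frame size, iteration count, and geometric cooling factor are arbitrary choices.

```python
import math
import random


def fruchterman_reingold(nodes, edges, width=1.0, height=1.0, iterations=50, seed=0):
    """Minimal sketch of the Fruchterman-Reingold force model.

    All node pairs repel with force k*k/d, linked nodes attract with d*d/k,
    and a cooling "temperature" caps how far a node may move per iteration.
    Edge weights are ignored in this sketch.
    """
    nodes = list(nodes)
    rng = random.Random(seed)
    pos = {v: [rng.uniform(0, width), rng.uniform(0, height)] for v in nodes}
    k = math.sqrt(width * height / len(nodes))  # "ideal" distance between nodes
    t = width / 10  # initial temperature (maximum step length)

    def push(disp, u, v, dx, dy, d, f):
        # Move u along (dx, dy) by f and v the opposite way.
        disp[u][0] += dx / d * f
        disp[u][1] += dy / d * f
        disp[v][0] -= dx / d * f
        disp[v][1] -= dy / d * f

    for _ in range(iterations):
        disp = {v: [0.0, 0.0] for v in nodes}
        for i, u in enumerate(nodes):  # repulsion between every pair of nodes
            for v in nodes[i + 1:]:
                dx, dy = pos[u][0] - pos[v][0], pos[u][1] - pos[v][1]
                d = max(math.hypot(dx, dy), 1e-9)
                push(disp, u, v, dx, dy, d, k * k / d)
        for u, v in edges:  # attraction along links (a negative push)
            dx, dy = pos[u][0] - pos[v][0], pos[u][1] - pos[v][1]
            d = max(math.hypot(dx, dy), 1e-9)
            push(disp, u, v, dx, dy, d, -(d * d / k))
        for v in nodes:  # limited step, clamped to the frame
            dx, dy = disp[v]
            d = max(math.hypot(dx, dy), 1e-9)
            step = min(d, t)
            pos[v][0] = min(width, max(0.0, pos[v][0] + dx / d * step))
            pos[v][1] = min(height, max(0.0, pos[v][1] + dy / d * step))
        t *= 0.95  # geometric cooling; linear schedules are also common
    return {v: (x, y) for v, (x, y) in pos.items()}
```

Changing the force functions or the cooling schedule changes the picture, which is the point made in the next paragraph.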

I’ll probably repeat this experiment with a more segmented graph, but I think this already shows that layout algorithms are not just innocently rendering a graph readable. Every method brings some features of the graph to the forefront, and the capacity for critical reading is as important as the willingness to engage in “critical use” that does not gloss over the differences between the tools used.

When it comes to search interfaces, there are a lot of good ideas out there, but there is also a lot of potential for further experimentation. Search APIs are well suited to this, as they let developers try out advanced functionality without having to build a heavy backend infrastructure.

Together with Alex Beaugrand, a student of mine, I built (a couple of months ago) another little search mashup / interface that allows users to switch between a tag cloud view and a list / cluster mode. contextDigger uses the delicious and Bing APIs to widen the search space using associated searches / terms and then Yahoo BOSS to download a thousand results that can be filtered through the interface. It uses the principle of faceted navigation to shorten the list: if you click on two terms, only the results associated with both of them will appear…
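The faceted filtering principle (only results associated with every selected term survive) amounts to a subset test. A minimal sketch, assuming results are hypothetical `(url, terms)` pairs; it is not contextDigger's actual code, and the function names are illustrative.

```python
from collections import Counter


def facet_filter(results, selected_terms):
    """Keep the URLs of results tagged with *every* selected term (logical AND)."""
    selected = set(selected_terms)
    return [url for url, terms in results if selected <= set(terms)]


def remaining_facets(results, selected_terms):
    """Count the other terms still present in the filtered results."""
    selected = set(selected_terms)
    counts = Counter()
    for _, terms in results:
        terms = set(terms)
        if selected <= terms:
            counts.update(terms - selected)
    return counts
```

Recomputing the remaining terms after each click is what lets the cloud or list shrink along with the result set.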

Since I started to play around with the latest (and really great, easy to use) version of the gephi graph visualization and analysis platform, I have developed an obsession with building .gdf output (.gdf is a graph description format that you can open with gephi) into everything I come across. The latest addition is a Facebook application called netvizz that creates a .gdf file describing either your personal network or the groups you are a member of.
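A .gdf file is plain text with a `nodedef>` section followed by an `edgedef>` section. The sketch below writes a minimal file with an id and a label per node; the columns netvizz actually exports are not reproduced here, and wrapping values that contain commas in single quotes is an assumption about how gephi's importer handles such values.

```python
def to_gdf(nodes, edges):
    """Serialize a graph as GDF text.

    nodes: list of (id, label) pairs; edges: list of (id1, id2) pairs.
    """
    def cell(value):
        text = str(value)
        # Assumption: values containing commas are wrapped in single quotes.
        return f"'{text}'" if "," in text else text

    lines = ["nodedef>name VARCHAR,label VARCHAR"]
    lines += [f"{cell(node_id)},{cell(label)}" for node_id, label in nodes]
    lines.append("edgedef>node1 VARCHAR,node2 VARCHAR")
    lines += [f"{cell(a)},{cell(b)}" for a, b in edges]
    return "\n".join(lines) + "\n"
```

Writing the result to a file such as `network.gdf` gives something that can be opened in gephi.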

There are of course many applications that let you visualize your network directly in Facebook, but by being able to download a file, you can choose your own visualization tool, play around with it, select and configure layout algorithms, change colors and sizes, rearrange by hand, and so forth. Toolkits like gephi are just so much more powerful than Flash toys…

My puny Facebook network (gephi can process much larger graphs)

What’s rather striking about these Facebook networks is how much the shape is connected to physical and social mobility. If you look at my network, you can easily see the Klagenfurt (my hometown) cluster on the far right, my studies in Vienna in the middle, and my French universe on the left. The small grape at the top left documents two semesters of teaching at the American University of Paris…

Update: v0.2 of netvizz is out, allowing you to add some data for each profile. Next up are GraphML and Mondrian file support, more data for profiles, etc…

Update 2: netvizz currently only works with http and not https. I will try to move the app to a different server ASAP.

After having finished my paper for the forthcoming deep search book I’ve been going back to programming a little bit and I’ve added a feature to termCloud search, which is now v0.4. The new “show relations” button highlights the eight terms with the highest co-occurrence frequency for a selected keyword. This is probably not the final form of the feature but if you crank up the number of terms (with the “term+” button) and look at the relations between some of the less common words, there are already quite interesting patterns being swept to the surface. My next Yahoo BOSS project, termZones, will try to use co-occurrence matrices from many more results to map discourse clusters (sets of words that appear very often together), but this will need a little more time because I’ll have to read up on algorithms to get that done…
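The "show relations" idea can be sketched as counting, over all results whose keyterms contain the selected keyword, how often every other term appears alongside it, then keeping the top eight. This is an illustration under the assumption that each result comes with a list of keyterms; it is not termCloud's actual code, and breaking ties alphabetically is an arbitrary choice.

```python
from collections import Counter


def related_terms(results, keyword, k=8):
    """Return the k terms that co-occur most often with keyword.

    results: list of keyterm collections, one per search result.
    Ties are broken alphabetically so the output is stable.
    """
    counts = Counter()
    for terms in results:
        terms = set(terms)
        if keyword in terms:
            counts.update(terms - {keyword})
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [term for term, _ in ranked[:k]]
```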

PS: termCloud Search was recently a “mashup of the day” at programmableweb.com…

Winter holidays and finally a little bit of time to dive into research and writing. After giving a talk at the Deep Search conference in Vienna last month (videos are available online), I’ve been working on the paper for the conference book, which should come out sometime next year. The question is still “democratizing search” and the subject is really growing on me, especially since I started to read more on political theory and the different interpretations of democracy that are out there. But more on that some other time.

One way of making search more productive in the framework of liberal democracy is to think of search not merely as the fastest way to get from a query to a Web page, but to consider how modern technologies might help provide an overview of the complex landscape of a topic. I have always thought that clusty (a metasearcher that takes results from Live, Ask, DMOZ, and other sources and organizes them in thematic clusters) does a really good job in that respect. If you search for “globalisation”, the first ten clusters are: Economic, Research, Resources, Anti-globalisation, Definition, Democracy, Management, Impact, Economist-Economics, Human. Clicking on a cluster will bring you the results that clusty’s algorithms judge as pertinent for the term in question. Very often, just looking at the clusters gives you a really good idea of what the topic is about, and instead of just homing in on the first result, the search process itself might have taught you something.

I’ve been playing around with Yahoo BOSS for one of the programming classes I teach and I’ve come up with a simple application that follows a similar principle. TermCloud Search (edit: I really would like to work on this some more and the name SearchCloud was already taken, so I changed it…) is a small browser-based app that uses the “keyterms” feature of Yahoo BOSS (a list of keywords the system provides for every URL found) to generate a tag cloud for every search you make. It takes 250 results and lets the user navigate these results by clicking on a keyword. The whole thing is really just a quick hack, but it shows how easy it is to add such “overview” features to Web search. Just try querying “globalisation” and look at the cloud: although it’s just extracted keywords, a representation of the topic and its complexity does emerge, at least somewhat.
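The two halves of such a tool (building the cloud, then filtering on a click) can be sketched as follows, assuming each result is a hypothetical `(url, keyterms)` pair. Scaling font sizes by the logarithm of term frequency is a common tag-cloud choice and an assumption here, as are the size bounds and the function names.

```python
import math
from collections import Counter


def term_cloud(results, max_terms=50, min_size=10, max_size=32):
    """Map the most frequent keyterms to font sizes (log-scaled).

    results: list of (url, keyterms) pairs, one per search result.
    """
    freq = Counter(term for _, terms in results for term in set(terms))
    top = freq.most_common(max_terms)
    if not top:
        return {}
    lo = math.log(min(n for _, n in top))
    hi = math.log(max(n for _, n in top))
    span = (hi - lo) or 1.0  # avoid dividing by zero when all counts are equal
    return {term: round(min_size + (math.log(n) - lo) / span * (max_size - min_size))
            for term, n in top}


def results_for(results, term):
    """Clicking a term in the cloud: list the URLs whose keyterms include it."""
    return [url for url, terms in results if term in terms]
```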

I’ll certainly explore this line of experimentation over the next months; jQuery is making the whole API thing really fun, so stay tuned. For the moment, I’m kind of fascinated by the possibilities and by imagining search as a pedagogical process, not just a somewhat inconvenient stage in accessing content that has to be sped up by personalization and such. Search can itself become a knowledge-producing (not just knowledge-finding) activity through which we may explore a subject on a more general level.

And now I’ve got an article to finish…