Category Archives: surveillance

EDIT (23/01/2015): Changed some text to make clear that you can still run Netvizz by grabbing the source code, registering a new app, and running it in developer mode.

EDIT (25/01/2015): I have written a small install guide for the source code on github. I’m unfortunately unable to help with individual problems; if you’re unfamiliar with server administration, your department’s tech support team should be able to help.

EDIT (28/01/2015): Since Facebook has changed the way apps are created, you can apparently no longer run scripts requiring extended permissions in newly created apps, even in developer mode (making my source code useless for you). I have therefore whipped up a version of Netvizz that can only do pages and groups without requiring extended permissions. Since this does not have to go through review, you can use the app directly here.

EDIT (29/01/2015): Facebook’s policy review has accepted the new version of Netvizz (with personal network functions removed) and the app is again accessible here. API v1.0 is still going to be retired in April and this may pose problems, but this is something for another day.

EDIT (02/05/2015): API v1.0 has now been retired, but a new version of Netvizz (v1.2) has survived the changes and should continue functioning in the foreseeable future. Personal and group friendship networks are gone for good.

Original Post:

Today Netvizz, an app that allows researchers to download data from the Facebook platform, was suspended by the company and I received a mail explaining why:

Your app is violating the following Platform Policies:

Platform Policy Section 1: Build a quality product.

Platform Policy 1.1: Build an app that is stable and easily navigable.

Platform Policy 3.3: Only use friend data (including friends list) in the person’s experience in your app.

To clarify, your app should be stable and easy to use and shouldn’t stall excessively. Additionally, you should not allow friend data export, even if that data is anonymized. You can access the full list of our Platform Policies here: https://developers.facebook.com/policy/.

Since Facebook has recently been very preoccupied with app privacy – for very good reasons actually – this does not come as a surprise. I have been anticipating API changes and the retirement of version 1.0 that comes with some very sensible changes in how data is delivered to platform apps for a while. Apps are clearly one of the biggest problems when it comes to Facebook’s privacy puzzle and most changes make a lot of sense. As Bernie Hogan wrote here, friendship connections are one of the casualties, as they will no longer be available to apps at all (v2.2 no longer makes them available). I was hoping to stall a little by moving to API v2.0, which still runs until April 2016, but this seems no longer viable after this morning’s news. As much as I agree with the general changes Facebook is making, I think it is a real shame that the analytical possibilities apps like Netvizz afford will no longer be available to researchers.

Over its roughly five year life span, what started as an inquiry into Facebook’s API, ultimately had over 60K unique users and analyzing their friendship network has been the start into graph analysis for many people. GetNet, a modified version of Netvizz, was used by Lada Adamic in her highly successful Coursera MOOC, allowing students to look at a network they are intimately familiar with, making network visualization much more tangible. GetNet actually still works, but will probably break in April 2015, if not shut down earlier.

For me personally, Netvizz has been an ambivalent project. On the one side, I enjoyed the tinkering with the API, but on the other, maintaining a complex tool in my spare time has often been a challenge. As anybody who offers software online for free will tell you, the mass of not always friendly emails can be daunting. I’m also not a computer scientist and I work in a humanities department, where technical work does not really count in performance reviews.

But the real problem with the current situation has little to do with me and much more with the many courses and research projects that have been relying on Netvizz. They are left out in the cold. So here are some elements that will hopefully help them deal with the situation:

- Despite my hesitation to make software public that can be used very easily to download large amounts of non-anonymized data, there is so much code already in the wild that another set of scripts is not going to make much of a difference. I’m therefore making Netvizz’ source code publicly available.

This should allow research projects relying on Netvizz to take the source code, register their own app at developers.facebook.com and run it in developer mode (just to make this clear, since I am the developer, I can actually still run the app, but it is no longer publicly available), which should work until April 30, 2015, the day v1.0 of the API retires. I apologize for the crappy code quality, this is one of those projects that grow and grow and never get a real redesign.

- I will try to enter into further communication with Facebook to see what can be done, but I don’t expect much from that.

- If that does not work, I will submit a version of Netvizz for review that excludes personal network features and focuses on pages and groups. It’s still going to “stall excessively”, though, since it gets a lot of data.

I have no idea how long any of this may take. In the meantime, check out this list for alternatives, most of which hopefully still work. But make no mistake: this may well be the beginning of the end for external Facebook research with digital methods.

One of the reasons I started to develop the netvizz application, was to get better insights into how Facebook envisions exchange of data and functionality with third party developers. From the beginning, I was quite amazed how much data a third-party app could actually get from the platform – not only about the users that actually install an app, but also about their friends and the groups they are members of. I hope to provide a systematic account of what I’ve learned at some point in the future. But today, I want to discuss a particular element in some more detail, the “read_stream” permission.

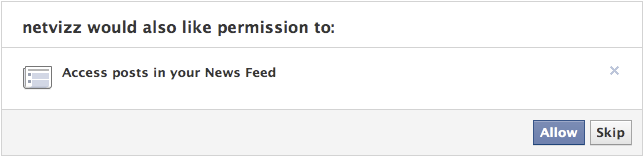

To introduce the matter, a couple of points concerning the Facebook APIs as such: every application written by a third-party developer requires a logged in user and this user defines the “scope” of data access the running instance of the application can get – remember that applications are generally used by many users, so the data gleaned from individual scopes can be combined. Applications have to explicitly ask for permission to access certain items and Facebook provides extensive documentation on the permission system, the profile properties, and a set of extended permissions. Users are asked to grant these permissions when they first start an app. This is the permission dialogue for netvizz:

Netvizz currently asks for the following permissions: user_status, user_groups, friends_likes, user_likes, and read_stream. When installing, you cannot refuse individual elements that are not considered “extended permissions”, only decide to not use the app at all. The user_status is actually superfluous and will be removed in the next iteration. The user_groups permission is needed to access group data and both _likes permissions are used for netvizz’ like network functionality.
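To make the idea of a permission “scope” a little more concrete, here is a minimal sketch of how an app might assemble the login dialog request that lists the permissions it wants. The permission names come from the paragraph above; the app ID and redirect address are placeholders, the helper name `build_login_url` is made up for illustration, and the dialog address and parameter names reflect the manual login flow as I understand it rather than Netvizz’ actual code.

```python
from urllib.parse import urlencode

# Permissions named in the post; user_status is described as superfluous.
REQUESTED_PERMISSIONS = [
    "user_status",
    "user_groups",
    "friends_likes",
    "user_likes",
    "read_stream",
]


def build_login_url(app_id, redirect_uri, permissions):
    """Assemble a login-dialog URL whose `scope` parameter lists the permissions.

    Hypothetical helper: the endpoint and parameter names are assumptions
    about the manual login flow, not taken from the Netvizz source.
    """
    query = urlencode({
        "client_id": app_id,
        "redirect_uri": redirect_uri,
        "scope": ",".join(permissions),
    })
    return "https://www.facebook.com/dialog/oauth?" + query


# Placeholder values, for illustration only.
url = build_login_url("APP_ID_PLACEHOLDER", "https://example.org/app/", REQUESTED_PERMISSIONS)
print(url)
```

Dropping user_status in a later iteration would then simply mean removing it from the list before the URL is built.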

Now, working on a couple of new features over the last months, I started to get more interested in posts because they have probably become the closest thing to a “carrier of publicness” on the Facebook platform. I was quite amazed how easy it was to extract large numbers of users and (some) of their data from pages – both likes and comments users make on posts on or by pages are in principle up for grabs. When doing some housekeeping recently, I noticed that some of the “engagement” metrics netvizz had provided for users’ friends in earlier versions were either broken or outdated and I decided to simply count the number of likes and posts friends make to replace the older metrics. I expected to only be able to read likes – through the friends_likes permission – and public posts. This was indeed true: in the beginning, all I got were public posts. Because I could get much more data through the Graph API Explorer, a developer sandbox that asks for all permissions by default (which can be changed, a great way to explore the permission structure), I discovered the read_stream permission.

The read_stream permission is presented by Facebook in the following way: “Provides access to all the posts in the user’s News Feed and enables your application to perform searches against the user’s News Feed.” It is a so-called “extended permission”, the developer doc noting that “Extended Permissions give access to more sensitive info and the ability to publish and delete data”. And, indeed, when asking for read_stream in netvizz, I suddenly got access to many more posts made by my friends, mostly going from “none” to “a lot”. From what I could gather after some random testing, I basically got access to all of the activities from my friends that would show up in my newsfeed, without the “top stories” filter. Because many things have the status of “post”, I could get a rather detailed (and timestamped) account of what my friends are doing on the platform. You can check out your own “posts” feed by following this link into the Graph API Explorer. Because comments and likes by users who you are not friends with on posts by somebody you are friends with also show up in your news feed, the read_stream permission allows an app to capture their activity as well. Facebook seems to be aware of this: because read_stream is an extended permission it gets its own permission dialogue and can actually be skipped:

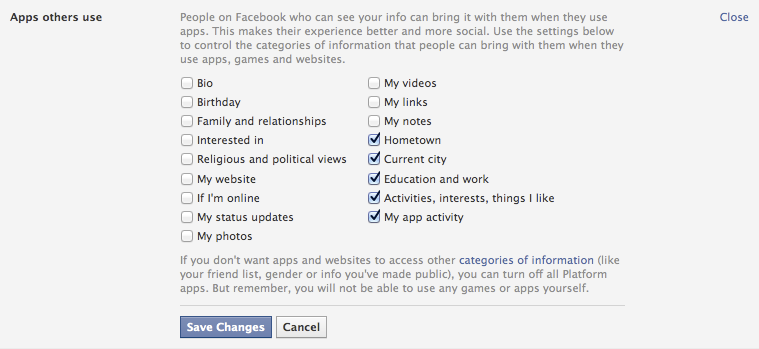

This is a good thing, but the wording seems a bit sparse: “Posts in your newsfeed” actually translates to “a minute account of your friends’ activities”. Granted, buried in the privacy settings is an option that allows us to modify more generally what information we share with the apps other people use, and these are the default settings:

It’s the “Activities, interests, things I like” option that allows the read_stream permission to work its magic. The people I am friends with on the platform are generally a rather privacy conscious bunch, but I could get the posts from most of them.

This is not a privacy scandal of any sort, measures are in place, but one can still make a couple of points:

- Apps as means for data capture are clearly not discussed enough. For serious data collection, however, going through the API is clearly the way to go and we need to pay more attention to this.

- Again and again: defaults matter. As seen above, the data available to apps used by friends is quite extensive with default settings.

- Again and again: language matters. The read_stream permission dialogue is certainly not explicit enough. Also: why is “app privacy” not in the privacy tab here?

- When we log into a third party site with our Facebook login, we are basically running an app. May be worth pondering what data we are shipping over.

Exploring APIs as important actors in the privacy debate and beyond is crucial. It’s often complicated work, though, and I hope that the developer community can help with that work a bit. It would be highly useful, I think.

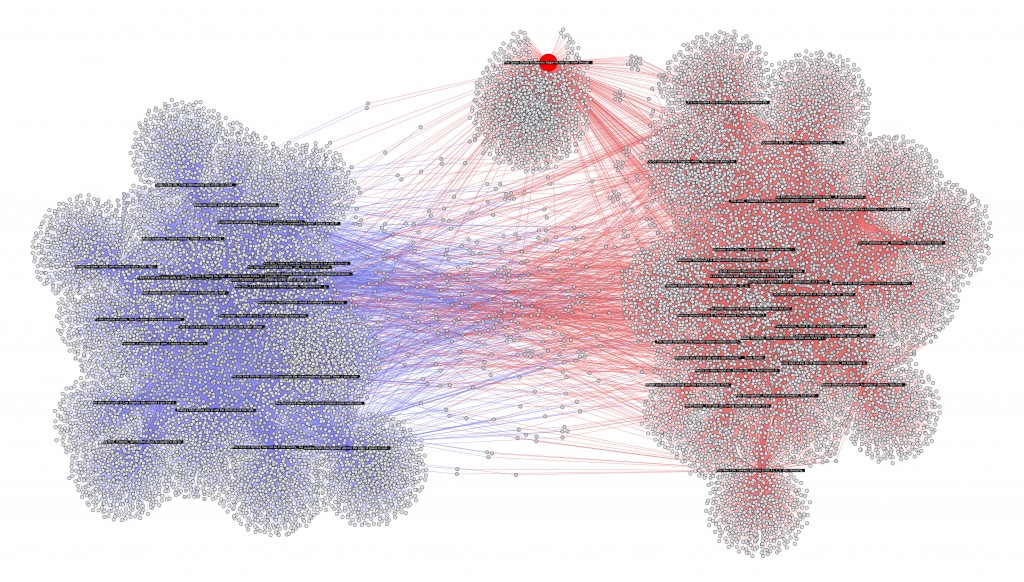

In my last post, I previewed a feature that I am currently building into netvizz: posts and users that comment and like them are thrown together into a bipartite graph. In this approach, it is easy to combine data from different pages, here from the 30 latest posts of the New York Times and the Wall Street Journal, plotting 27K users (bigger image behind the click):

The app will start spitting out more metrics in the next version, but it’s easy to see from the gephi graph that the NY Times (red) has a bit more users (grey) than the WSJ (blue). There is a bit of overlap in terms of (active) audience, but in general, there seem to be quite distinct populations of the short span the data covers. Interestingly, one post – talking about the space shuttle Endeavor – is a true outlier: it has succeeded in capturing a less “specific” audience.
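For readers who want to see what such a bipartite structure looks like in data terms, here is a small sketch. It is not the netvizz implementation: the record layout (page, post, user) and all identifiers are invented for illustration, and the real data would come from the likes and comments on each post.

```python
from collections import defaultdict

# Hypothetical interaction records: (page, post_id, user_id).
# A record means "this user liked or commented on this post".
interactions = [
    ("nytimes", "nyt_post_1", "user_a"),
    ("nytimes", "nyt_post_1", "user_b"),
    ("nytimes", "nyt_post_2", "user_b"),
    ("wsj", "wsj_post_1", "user_b"),
    ("wsj", "wsj_post_1", "user_c"),
]


def build_bipartite(records):
    """Return user-post edges plus the set of active users per page.

    Users and posts are the two node types; edges only ever connect
    a user to a post, never two nodes of the same type.
    """
    edges = set()
    users_by_page = defaultdict(set)
    for page, post_id, user_id in records:
        edges.add((user_id, post_id))
        users_by_page[page].add(user_id)
    return edges, users_by_page


edges, users_by_page = build_bipartite(interactions)

# Users active on both pages correspond to the overlap visible in the graph.
overlap = users_by_page["nytimes"] & users_by_page["wsj"]

print(len(edges), "edges")
print({page: len(users) for page, users in users_by_page.items()})
print("shared users:", sorted(overlap))
```

Because the edge list can simply be concatenated across pages, combining data from any number of pages takes no extra work, which is exactly what makes the approach convenient and, in privacy terms, uncomfortable.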

As this method could be applied to a potentially infinite number of pages, this is really becoming quite problematic in terms of privacy. I have cut the labels for users, but they are in the data. I am unsure about this for the moment, but this feature may not make it in full into the next version.

German publisher Heise Verlag is an international curiosity. It publishes a small number of highly influential computer-related magazines that give a voice to a tech ethos that is at the same time extremely competent in the subject matter (I’ve been a steady subscriber to c’t magazin for over 15 years now, and I am still baffled sometimes just how good it is) and very much aware of the social and political implications of computing (their online magazine Telepolis testifies to that).

Data protection and privacy are long-standing concerns of the heise editors and true to a spirit of society-oriented design, they have introduced a concept as well as a technical implementation of a two-step “like” button. Such buttons, by Facebook or other companies, have of course become a major vector of user-tracking on the Web. By using an iframe, every button loads some code from Facebook’s server and sends along the referring url (e.g. http://nytimes.com/articlename/blabla). The iframe being hosted on the facebook.com domain, cross-site privacy protections can be circumvented, the url information connected to an identifier cookie and, consequently, to a user account. Plugins like the Priv3 project block these mechanisms but a) users have to have a heightened level of awareness to even consider installing something like this and b) the plugin interferes with convenient functions like Google search preferences.

Heise’s suggestion, which they already implemented on their own sites, is simple: websites can download a small bit of code that implements a two-step procedure: the “like” button is greyed out after the page first loads and there is no tracking happening. A first click on the button loads the “real” Facebook code, and the second click provides the usual functionality. The solution is very simple to implement and really a very minor inconvenience. Independently from the debate whether “like” buttons and such add any real value to the Web, this example shows that “social” features like these can be designed in a way that does not necessarily lead to pervasive user tracking.
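The logic of the two-step procedure can be modeled in a few lines. This is only a sketch of the idea, not Heise’s code (which runs in the browser): the class name `TwoClickButton` and the `load_widget` callback are invented here, and the callback merely stands in for fetching the third-party button code.

```python
class TwoClickButton:
    """Model of a two-step button: nothing third-party loads until the first click."""

    def __init__(self, load_widget):
        # load_widget stands in for fetching the real third-party button code.
        self._load_widget = load_widget
        self.widget_loaded = False

    def click(self):
        if not self.widget_loaded:
            # First click: only now is the third-party code requested.
            self._load_widget()
            self.widget_loaded = True
            return "activated"
        # Any later click behaves like the ordinary button.
        return "like"


# Record every request that would go out to the third party.
requests = []
button = TwoClickButton(lambda: requests.append("load third-party widget"))

print(requests)        # no request has been made on page load
first = button.click()
second = button.click()
print(first, second, requests)
```

The point of the design is visible in the `requests` list: a visitor who never clicks generates no request to the third party at all.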

The echo to this initiative has been very strong (check the Slashdot discussion here), especially in Germany, where privacy (or rather Datenschutz, a concept less centered on the individual but rather on the role of data in society) is an intensely debated issue, due to obvious historical reasons. Facebook apparently threatened to blacklist heise.de at one point, but has since then backpedaled. After all, c’t magazin prints around 600,000 copies of every issue and is extremely influential in the German (and Dutch!) computer landscape. I am very curious to see how this story unfolds, because let’s be clear: Facebook’s earning potential is closely tied to its capacity to capture, enrich, and analyze user data.

This initiative – and the Heise ethos in general – underscores that a “respectable” and sober engineering culture does not exclude an explicit normative stance on social and political issues. And it shows that this stance can be translated into technical models, implemented, and shared, both as an idea and as code.

When it comes to scrutinizing companies for their actions and policies concerning control over information, privacy issues, and market dominance in areas related to public debate, large media conglomerates have been the traditional objects of analysis. More recently, Internet giants such as Google and Facebook have been critically examined and when the hype levels off, Twitter will probably be the next on the list. Malcolm Gladwell’s recent piece in The New Yorker may very well be an indicator of things to come.

Whether the issues related to “social media” are important or not, I have the feeling that the debate overshadows questions and problem fields that may in fact be much more important. The most obvious case, in my view, is the debate on privacy on Facebook. While the matter is not irrelevant, I think that e.g. present and future state-run information systems such as the French EDVIGE, a central police database that assembles all kinds of personal information concerning select persons “of interest”, have been overshadowed by debate on whether your employer can see the pictures that document your drinking binges after somebody (you?) put them on the ‘Book. There is a certain disequilibrium in how Internet researchers and critics distribute their attention that has allowed all kinds of things to pass below the radar. But there is one event that has really shaken me up recently, both because of its importance and the lack of outcry it garnered, at least in my echo chamber: the acquisition of the Reuters group by the Thomson corporation in 2008 and the creation of Thomson Reuters, an information giant second to none.

I have stumbled upon Thomson Reuters a couple of times over the last years: first, when I researched the history of citation indexing, I learned that Thomson Scientific had bought the Institute for Scientific Information (and their Web of Science citation index megabase from which things like the notorious Impact Factor are calculated) in 1992; then again when I noticed that the ClearForest API for term extraction had been renamed, remodeled, and rebranded as OpenCalais after Reuters bought the company in 2007; finally, last year, when I noticed that the Reuters video platform appeared more and more often in articles and links. When I finally started to look a little closer (NYSE:TRI) I was astounded to find a company with a market cap of $31B, annual revenues of $13B, and 55K+ employees all over the world. Yes, this is not Apple big, but still very, very big for a company that sells information.

I knew Reuters from my studies in communication science as the world’s biggest news agency (with roughly one and a half competitors: Associated Press and Agence France Presse) but I had never consciously registered the Thomson company – a Canadian family business that went from the media (owning the London Times at one point) to publishing before transforming itself in a rather risky move into a digital information broker for all kinds of special fields (legal, health, finance, etc.). Reuters was a perfect match and I really wonder how that merger went through without too much hassle from the different regulatory bodies. Even more so when I found out that Reuters actually had devised a very spicy regulatory clause when it made its IPO in 1984: to avoid control over such a central source of information, no single shareholder would be allowed to hold more than 15% of the company’s stock. Apparently, that clause was enacted at least once when Murdoch’s News Corporation (already holding 15%) bought a competitor that also owned a piece of Reuters and consequently had to shed stock to stay below the threshold. The merger effectively brought the new Thomson Reuters under full control (53%) of The Woodbridge Company, a private holding that represents the Thomson family.

Such control over a news agency (and the many more specialized services that are part of the giant’s portfolio) should give us pause in the best of times when media companies are swimming in resources, are able to pay good money for good journalism, and keep their own network of correspondents. But recent years have seen nothing but cost cutting in journalism, which has led to an even greater reliance on news agencies. I wager that Google News would work a lot less well if people actually started to write their own copy instead of remodeling Reuters’ and AP send outs.

But despite these rather traditional – but nonetheless crucial – concerns over media ownership and control, there is a second point that is somewhat closer to my area of expertise. I have recently been thinking a lot about how to best phrase criticism of the assumption that digital networks necessarily lead to decentralization. Thomson Reuters – but also other information giants such as Google and Facebook – is a great example for how digital technologies can lead to quite impressive cost reductions for economies of scale and, consequently, market concentration. These arguments should be taken into account:

- While the barriers of entry to the Internet are really low (you can have your own blog in minutes), scaling up to millions of visitors is a real challenge. Building your own datacenter is a real bump in the learning curve and to get over it, you need to make certain investments. But once you pass that bump, scaling suddenly becomes cheaper again because you have the knowledge resources and experience that can now be applied to make the datacenter grow. One of Google’s strengths lies in this area and this immensely facilitates branching out into new information ventures. The same goes for Thomson Reuters: they master platform technology and distribution technologies for all kinds of contents and they can build on that mastery to add new things to serve information to a globalized planet. To use the language of the long tail: there may be more special interest information that can find an audience with shelf space becoming effectively unlimited; but there is also no longer a need for more than one shelf.

- The same goes for a more elusive matter: the mastery of information. The database techniques and indexing tools we use to store information – as well as the search and data-mining algorithms – can be very easily transported from one domain to the next. While it may be (very) difficult to create useful search tools for medical information, once you have built them it is rather easy to adapt these tools to, let’s say the legal domain. Again, this is what makes Google strong: basic search technology can be applied to advertising, books, mail, product prices, and even video if you can do automatic transcription. With the acquisition of ClearForest, Thomson Reuters has class-leading in-house data-mining and this is not something you can get by simply posting a couple of job ads in the local newspaper. Data-mining is extremely useful in areas where fast decision-making is crucial but also when it comes to building powerful search tools. Again, these techniques can be applied to any number of fields and once you have the basics right you can just add new domains with very little cost.

These two points go a long way in explaining why the Internet has seen the lightning fast emergence of network giants over the last couple of years. I really don’t want to postulate yet another “law” of the Net but I believe that there is something to this idea of the bump: it’s easy to have a basic presence on the Web but it’s hard to scale up to a large audience and to use advanced computational techniques; but once you pass the bump, the economies of scale kick in and from there it seems like there are no barriers to growth. The Thomsons have certainly made that bet when they acquired Reuters and so far, it seems to work out quite nicely for them.

I hope we can find a means to extend critique from questions of ownership into the heart of the (informational) beast and come up with better ways to understand how the still ongoing shift to exclusively digital information affords new means of handling and exploiting that information – with organizational, economic, and political consequences. While that work is starting to take shape for consumer companies like Google that are in the spotlight, there is surprisingly little on invisible network giants like Thomson Reuters that cater mostly to professional clients.

Programmable web just pointed to a really interesting mashup competition. Sunlight labs announced the Apps for America contest and the idea is to attract programmers that will use a series of data APIs to “make Congress more accountable, interactive and transparent”. Among the criteria two stand out:

- Usefulness to constituents for watching over and communicating with their members of Congress

- Potential impact of ethical standards on Congress

The design goal is accountability and that indeed is a perfect case for society oriented design. While people in Europe love to scold the US for their lack of data protection and privacy laws, just looking at the APIs the contest proposes to use makes me salivate for something similar in France. If you look at the Capitol Words API for example, just imagine the kind of discourse analysis one could build on that: representations of what is said in Congress that make the data digestible and bring at least some of the debate potentially closer to citizens. The whole thing is just a really great idea…

Continuing in the direction of exploring statistics as an instrument of power more characteristic of contemporary society than means of surveillance centered on individuals, I found a quite beautiful citation by French sociologist Gabriel Tarde in his Les Lois de l’imitation (1890/2001, p.192f):

Si la statistique continue à faire des progrès qu’elle a faits depuis plusieurs années, si les informations qu’elle nous fournit vont se perfectionnant, s’accélérant, se régularisant, se multipliant toujours, il pourra venir un moment où, de chaque fait social en train de s’accomplir, il s’échappera pour ainsi dire automatiquement un chiffre, lequel ira immédiatement prendre son rang sur les registres de la statistique continuellement communiquée au public et répandue en dessins par la presse quotidienne.

And here’s my translation (that’s service, folks):

If statistics continues to make the progress it has made for several years now, if the information it provides us with continues to become more perfect, faster, more regular, steadily multiplying, there might come the moment where from every social fact taking place springs – so to speak – automatically a number that would immediately take its place in the registers of the statistics continuously communicated to the public and distributed in graphic form by the daily press.

When Tarde wrote this in 1890, he saw the progress of statistics as a boon that would allow a more rational governance and give society the means to discuss itself in a more informed, empirical fashion. Nowadays, online, a number springs from every social fact indeed but the resulting statistics are rarely a public good that enters public debate. User data on social networks will probably prove to be the very foundation of any business that is to be made with these platforms and will therefore stay jealously guarded. The digital town squares are quite private after all…

When talking about the politics of the social Web and particularly online networking, the first issue coming up is invariably the question of privacy and its counterpart, surveillance – big brother, corporations bent on world dominance, and so on. My gut reaction has always been “yeah, but there’s a lot more to it than that” and on this blog (and hopefully a book in a not so far future) I’ve been trying to sort out some of the political issues that do not pertain to surveillance. For me, social networking platforms are more relevant to politics as marketing rather than surveillance. Not that these tools cannot function quite formidably to spy on people, but it is my impression that contemporary governance relies on other principles more than the gathering of intelligence about individual citizens (although it does, too). But I’ve never been very pleased with most of the conceptualizations of “post-disciplinarian” mechanisms of power, even Deleuze’s Post-scriptum sur les sociétés de contrôle, although full of remarkable leads, does not provide a fleshed-out theoretical tool – and it does not fit well with recent developments in the Internet domain.

But then, a couple of days ago I finally started to read the lectures Foucault gave at the Collège de France between 1971 and 1984. In the 1977-1978 term the topic of that class was “Sécurité, Territoire, Population” (STP, Gallimard, 2004) and it holds, in my view, the key to a quite different perspective on how social networking platforms can be thought of as tools of governance involved in specific mechanisms of power.

STP can be seen as both an extension and reevaluation of Foucault’s earlier work on the transition from punishment to discipline as central form in the exercise of power, around the end of the 18th century. The establishing of “good practice” is central to the notion of discipline and disciplinary settings such as schools, prisons or hospitals serve most of all as means for instilling these “good practices” into their subjects. Jeremy Bentham’s Panopticon – a prison architecture that allows a single guard to observe a large population of inmates from a central control point – has in a sense become the metaphor for a technology of power that, in Foucault’s view, is part of a much more complex arrangement of how sovereignty can be performed. Many a blogpost has been dedicated to applying the concept to social networking online.

Curiously though, in STP, Foucault calls the Panopticon both modern and archaic, and he goes as far as dismissing it as the defining element of the modern mechanics of power; in fact, the whole course is organized around the introduction of a third logic of governance besides (and historically following) “punishment” and “discipline”, which he calls “security”. This third regime is no longer focusing on the individual as subject that has to be punished or disciplined but on a new entity, a statistical representation of all individuals, namely the population. The logic of security, in a sense, gives up on the idea of producing a perfect status quo by reforming individuals and begins to focus on the management of averages, acceptable margins, and homeostasis. With the development of the social sciences, society is perceived as a “natural” phenomenon in the sense that it has its own rules and mechanisms that cannot be so easily bent into shape by disciplinary reform of the individual. Contemporary mechanisms of power are, then, not so much based on the formatting of individuals according to good practices but rather on the management of the many subsystems (economy, technology, public health, etc.) that affect a population so that this population will refrain from starting a revolution. Foucault actually comes pretty close to what Ulrich Beck will call, eight years later, the Risk Society. The sovereign (Foucault speaks increasingly of “government”) assures its political survival no longer primarily through punishment and discipline but by managing risk by means of scientific arrangements of security. This not only means external risk, but also risk produced by imbalance in the corps social itself.

I would argue that this opens another way of thinking about social networking platforms in political terms. First, we would look at something like Facebook in terms of population, not in terms of the individual. I would argue that governmental structures and commercial companies are only in rare cases interested in the doings of individuals – their business is with statistical representations of populations because this is the level on which contemporary mechanisms of power (governance as opinion management, market intelligence, cultural industries, etc.) prefer to operate. And second – and this really is a very nasty challenge indeed – we would probably have to give up on locating power in specific subsystems (say, information and communication systems) and trace the interplay between the many different layers that compose contemporary society.

Mashable.com has a piece on Google’s expanding media empire and there is one observation that is actually quite obvious but which I’ve never really thought about:

It becomes pretty clear how Google is going about launching new products or acquiring others: analyzing the most popular topics within its search engine.

People are searching a lot for Second Life? All right, let’s launch our own 3D virtual world then. Google Trends already exploits search statistics for really simple trend and market analysis, but in a dynamic marketplace like the Web the vast number of search queries Google registers can really be a much more formidable tool for taking society’s pulse. There is no doubt that Google uses this data internally for some heavy market research, and I could imagine that the company might license these tools or data to third parties in the future. Nielsen would get some serious competition.

The point I find really interesting about this matter is that Google is mostly criticized for commercially biased search results, its monopoly on online search and the gathering of data that might be used to spy on citizens – I have yet to read something that reflects on data collection on users’ search behavior not only as potentially dangerous to individual rights but as a unique tool for corporate strategy. Mining its all-knowing logfile might give Google a competitive advantage that other companies simply cannot emulate. Spotting shifts in cultural trends early could give its business planning an asset that money (currently) cannot buy. It would be prudent to convert to Googlism while they still accept new members.

Two things currently stand out in my life: a) I’m working on an article on the relationship between mathematical network analysis and the humanities, and b) continental

Part of the research that I’m looking into is what has been called “The New Science of Networks” (NSN), a field founded mostly by physicists and mathematicians who started to quantitatively analyze very big networks belonging to very different domains (networks of acquaintance, the Internet, food networks, brain connectivity, movie actor networks, disease spread, etc.). Sociologists have worked with mathematical analysis and network concepts since at least the 1930s, but because of the limits of available data, the networks studied rarely went beyond hundreds of nodes. NSN, however, studies networks with millions of nodes and tries to come up with representations of structure, dynamics and growth that are not just used to make sense of empirical data but also to build simulations and come up with models that are independent of specific domains of application.

Very large data sets have only become available in recent history: social network data used to be based on either observation or surveys and was thus inherently limited. Since the arrival of digital networking, a lot more data has been produced because many forms of communication or interaction leave analyzable traces. From newsgroups to trackback networks on blogs, very simple crawler programs suffice to produce matrices that include millions of nodes and can be played around with indefinitely, from all kinds of angles. Social network sites like Facebook or MySpace are probably the best example of data pools just waiting to be analyzed by network scientists (and marketers, but that’s a different story). This brings me to a naive question: what is a social network?
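
To make the step from "traces" to "matrices" a little more concrete, here is a minimal sketch in Python. It assumes that a crawler has already collected interaction traces as (sender, receiver) pairs, for example replies in a newsgroup; the function name `build_adjacency` and the sample names are hypothetical and only serve as illustration. Real data sets with millions of nodes would call for a sparse representation rather than a dense list of lists.

```python
def build_adjacency(traces):
    """Turn (sender, receiver) traces into a weighted, directed adjacency matrix."""
    # Every distinct name appearing in a trace becomes a node.
    nodes = sorted({person for pair in traces for person in pair})
    index = {name: i for i, name in enumerate(nodes)}
    # matrix[i][j] counts how often node i addressed node j.
    matrix = [[0] * len(nodes) for _ in nodes]
    for sender, receiver in traces:
        matrix[index[sender]][index[receiver]] += 1
    return nodes, matrix


# Hypothetical traces, e.g. "ann replied to bob".
traces = [("ann", "bob"), ("bob", "ann"), ("ann", "cat"), ("ann", "bob")]
nodes, matrix = build_adjacency(traces)

# Out-degree (number of outgoing interactions) per node: the sum of each row.
out_degree = {name: sum(row) for name, row in zip(nodes, matrix)}
```

Note that even this tiny example already contains a formalization decision: only the fact that someone addressed someone else is counted, not what was said or why.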

The problem of creating data sets for quantitative analysis in the social sciences is always twofold: a) what do I formalize, i.e. what are the variables I want to measure? b) how do I produce my data? The question is that of building a representation. Do my categories represent the defining traits of the system I wish to study? Do my measuring instruments truly capture the categories I decided on? In short: what to measure and how to measure it, categories and machinery. The results of mathematical analysis (which is not necessarily statistical in nature) will only begin to make sense if formalization and data collection were done with sufficient care. So, again, what is a social network?

Facebook (pars pro toto for the whole category qua currently most annoying of the bunch) allows me to add “friends” to my “network”. By doing so, I am “digitally mapping out the relationships I already have”, as Mark Zuckerberg recently explained. So I am, indeed, creating a data model of my social network. Fifty million people are doing the same, so the result is a digital representation of the social connectivity of an important part of the Internet-connected world. From a social science research perspective, we could now ask whether Facebook’s social network (as database) is a good model of the social network (as social structure) it supposedly maps. This does, of course, depend on what somebody would want to study, but if you ask yourself whether Facebook is an accurate map of your social connections, you’ll probably say no. For the moment, the formalization and data collection that apply when people use a social networking site do not capture the whole gamut of our daily social interactions (work, institutions, groceries, etc.) and do not include many of the people who play important roles in our lives. This does not mean that Facebook would not be an interesting data set to explore quantitatively, but it means that there still is an important distinction between the formal model (data and algorithm, what? and how?) of “social network” produced by this type of information system and the reality of daily social experience.

So what’s my point? Facebook is not a research tool for the social sciences and nobody cares whether the digital maps of our social networks are accurate or not. Facebook’s data model was not created to represent a social system but to produce a social system. Unlike the descriptive models of science, computer models are performative in a very materialist sense. As Baudrillard argues, the question is no longer whether the map adequately represents the territory, but in which way the map is becoming the new territory. The data model in Facebook is a model in the sense that it orients rather than represents. The “machinery” is not there to measure but to produce a set of possibilities for action. The social network (as database) is set to change the way our social network (as social structure) works – to produce reality rather than map it. But much as we can criticize data models in research for not being adequate to the phenomena they try to describe, we can examine data models, algorithms and interfaces of information systems and decide whether they are adequate for the task at hand. In science, “adequate” can only be defined in connection to the research question. In design and engineering there needs to be a defined goal in order to make such a judgment. Does the system achieve what I set out to achieve? And what is the goal, really?

When looking at Facebook and what the people around me do with it, the question of what “the politics of systems” could mean becomes a little clearer: how does the system affect people’s social network (as social structure) by allowing them to build a social network (as database)? What’s the (implicit?) goal that informs the system’s design?

Social networking systems are in their infancy and both technology and uses will probably evolve rapidly. For the moment, at least, what Facebook seems to be doing is quite simply to sociodigitize as many forms of interaction as possible; to render the implicit explicit by formalizing it into data and algorithms. But beware, merry people of The Book of Faces! For in a database “identity” and “consumer profile” are one and the same thing. And that might just be the design goal…