
This preprint of a paper I wrote about a year and a half ago, entitled Institutionalizing without Institutions? Web 2.0 and the Conundrum of Democracy, is the direct result of what I experienced as a major cultural destabilization. Born in Austria, living in France (and soon the Netherlands), and working in a field that has a strong connection with American culture and scholarship, I had the feeling that debates about the political potential of the Internet were strongly structured along national lines. I called this moral preprocessing.

This paper, which will appear in an anthology on Internet governance later this year, is my attempt to argue that it is not only technology which poses serious challenges, but rather the elusive and difficult concept of democracy. My impression was – and still is – that the latter term is too often used too easily and without enough attention paid to the fundamental contradictions and tensions that characterize this concept.

Instead of asking whether or not the Internet is a force of democratization, I wanted to show that this term, democratization, is complicated, puzzling, and full of conflict: a conundrum.

Published as: B. Rieder (2012). Institutionalizing without institutions? Web 2.0 and the conundrum of democracy. In F. Massit-Folléa, C. Méadel & L. Monnoyer-Smith (Eds.), Normative experience in internet politics (Collection Sciences sociales) (pp. 157-186). Paris: Transvalor-Presses des Mines.

While scholars often underline their commitment to non-deterministic conceptions of “effects”, models of causality in the human and social sciences can still be a bit simplistic sometimes. But a more subtle approach to causality would have to concede that, while most often cumulative and contradictory, lines of causation can sometimes be quite straightforward. Just consider this example from Commensuration as a Social Process, a great text from 1998 by Espeland and Stevens:

Faculty at a well-regarded liberal arts college recently received unexpected, generous raises. Some, concerned over the disparity between their comfortable salaries and those of the college’s arguably underpaid staff, offered to share their raises with staff members. Their offers were rejected by administrators, who explained that their raises were ‘not about them.’ Faculty salaries are one criterion magazines use to rank colleges. (p.313)

This is a rather direct effect of ranking techniques on something very tangible, namely salary. But the relative straightforwardness of the example also highlights a bifurcation of effects: faculty gets paid more, staff less. The specific construction of the ranking mechanism in question therefore produces social segmentation. Or does it simply reinforce the existing segmentation between faculty and staff that led college evaluators to construct the indicators the way they did in the first place? Well, there goes the simplicity…

In August 2010, Edinburgh sociologist Donald MacKenzie (whose book An Engine, not a Camera is an outstanding piece of scholarship) wrote an article in the Financial Times titled Unlocking the Language of Structured Securities, in which he discusses a software suite for financial analysis called Intex and compares it to a language that allows one to see and interact with the world in certain ways rather than others. MacKenzie describes his first encounter with Intex as a moment of revelation that quickly turned into doubt:

The psychological effect was striking: for the first time, I felt I could understand mortgage-backed securities. Of course, my new-found confidence was spurious. The reliability of Intex’s output depends entirely on the validity of the user’s assumptions about prepayment, default and severity. Nevertheless, it is interesting to speculate whether some of the pre-crisis vogue for mortgage-backed securities resulted from having a system that enabled neophytes such as myself to feel they understood them.

While MacKenzie does not go as far as imputing the recent financial crisis to a piece of software, he points out that Intex is not recursive in its mode of analysis: when evaluating a complex financial asset, for example one of the now (in)famous CDOs that are made up of other assets, themselves combining further values, and so on, Intex does not follow the trail down to the basic entities (the individual mortgages) but calculates risk only from the rating of the asset in question. MacKenzie argues that Goldman-Sachs’ 2006 decision to basically get out of mortgage-based securities may well be a result of their commitment to go beyond available tools by implementing a (very costly) “bottom-up” approach that builds its evaluation of an asset by calculating up from the basic units of value. The card-house character of these financial instruments could become visible by changing tools and thereby changing perspective or language. Software makes it possible to implement very different practices or languages and to make them pervasive; but how does a company choose one strategy over another? What are the organizational and “cultural” factors that led Goldman-Sachs to change its approach? These may be the truly challenging questions here, although they may never get answered. But they lead to a methodological lesson.

The particular strength of systems like Intex lies in their capacity to black-box evaluation strategies behind a neat interface that allows users to immediately operate on the underlying models, weaving these models into their decisions and practices. Conceptually, we understand the ways in which software shapes action better and better but the empirical complexity of concrete settings is positively daunting even outside of the realm of financial markets. What I take from MacKenzie’s work is that in order to understand the role of software, we have to be very familiar with the specific terrain a system is embedded in, instead of bringing overarching assumptions to the table. Software is a means for building structure and this building is always happening in particular organizational settings that are certainly caught up in larger trends but also full of local challenges, politics, and knowledge. Programs are at the same time a structuring backdrop for practice and part of a strategic repertoire that actors have at their disposal.

The case of financial software indicates that market behavior standardizes around available tools, which leads to the systemic delegation of certain decision processes to software makers. This may result in a particular type of herd behavior and potentially in imbalance and crisis. Somewhat ironically, it is Goldman-Sachs that showed the potential of going against the grain by questioning programmed wisdom. That the company recently paid $550M in fines for abusing its analytical advantage by betting against a CDO it was selling to customers as an investment indicates that ethics and cunning are unfortunately two different pairs of shoes…
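To make the contrast between rating-based and “bottom-up” evaluation a bit more tangible, here is a deliberately naive sketch in Python. Nothing in it reflects how Intex or Goldman-Sachs actually calculate risk: the `Asset` class, the probabilities, and the plain averaging are assumptions made purely for illustration. The only point is structural: one function stops at the rating of the asset in question, the other recurses down to the individual mortgages.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Asset:
    """A hypothetical asset that may be composed of other assets."""
    name: str
    rated_default_prob: float  # what the rating claims (made-up number)
    components: List["Asset"] = field(default_factory=list)


def top_level_risk(asset: Asset) -> float:
    """Non-recursive view: trust the rating of the asset itself."""
    return asset.rated_default_prob


def bottom_up_risk(asset: Asset) -> float:
    """Recursive view: walk down to the leaves and average upwards.

    Averaging is a toy aggregation rule, not a real valuation model.
    """
    if not asset.components:
        return asset.rated_default_prob
    risks = [bottom_up_risk(component) for component in asset.components]
    return sum(risks) / len(risks)


# Individual mortgages are the leaves of the structure.
mortgage_a = Asset("mortgage A", 0.25)
mortgage_b = Asset("mortgage B", 0.5)
mortgage_c = Asset("mortgage C", 0.125)

# A pool of mortgages, and a CDO built from the pool plus another mortgage.
pool = Asset("pool", 0.05, [mortgage_a, mortgage_b])
cdo = Asset("CDO", 0.01, [pool, mortgage_c])

rating_view = top_level_risk(cdo)
bottom_up_view = bottom_up_risk(cdo)
```

With these made-up numbers the two views diverge sharply, which is all the sketch is meant to show: the same object looks different depending on whether the tool follows the trail down or not.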

When Lawrence Lessig famously stated that “code is law”, the most simple and striking example was AOL’s decision to – arbitrarily – limit the number of people that could log into a chat room at the same time to 23. While the social consequences of this rule were quite far-reaching, they could be traced to a simple line of text somewhere in a script stating that “limit = 23;” (apparently someone changed that to “limit = 36;” a bit later).

When starting to work on a data exploration project linking Web sites to Twitter, I wasn’t aware that the microblogging site had similar limitations built in. Sometime in 2008, Twitter apparently capped the number of people one can follow at 2000. I stumbled over this limit by accident when graphing friends and followers for the 24K+ accounts we are following for our project:

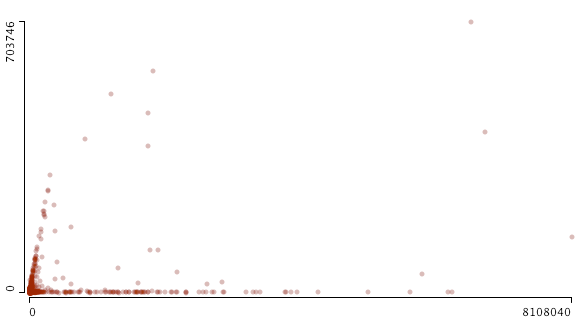

This scatterplot (made with Mondrian, x: followers / y: friends) shows the cutoff quite well but it also indicates that things are a bit more complicated than “limit = 2000;”. From looking at the data, it seems that a) beyond 2000, the friend limit is directly related to the number of followers an account has and b) some accounts are exempt from the limit. Just like everywhere else, there are exceptions to the rule and “all are equal before the law” (UN Declaration of Human Rights) is a standard that does not apply in the context of a private service.
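What a rule that is “a bit more complicated than limit = 2000;” might look like can be sketched in a few lines of Python. This is guesswork based on the scatterplot, not Twitter’s actual code: the function names, the `exempt` flag, and above all the ratio of 110 friends per 100 followers are hypothetical values chosen only to illustrate the shape of such a rule.

```python
from typing import Optional

BASE_LIMIT = 2000      # the cap mentioned above
RATIO_PERCENT = 110    # hypothetical: friends allowed per 100 followers


def friend_ceiling(followers: int, exempt: bool = False) -> Optional[int]:
    """Return the maximum number of friends, or None if no limit applies."""
    if exempt:
        return None  # some accounts are simply not subject to the rule
    # Everyone may follow up to BASE_LIMIT; beyond that the ceiling
    # grows with the number of followers.
    return max(BASE_LIMIT, followers * RATIO_PERCENT // 100)


def may_follow_one_more(friends: int, followers: int, exempt: bool = False) -> bool:
    """Decide whether an account may add another friend."""
    ceiling = friend_ceiling(followers, exempt)
    return ceiling is None or friends < ceiling
```

Under these assumptions, an account with 100 followers is stuck at 2000 friends, an account with 10,000 followers can go on to 11,000, and an exempt account is not checked at all.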

While programmed rules and limits play an important role in structuring possibilities for communication and exchange, a second graph indicates that social dynamics leave their traces as well:

This is the same data but zoomed out to include the accounts with the highest friend and follower count. There is a distinct bifurcation in the data, two trends emerging at the same time: a) accounts that follow the friend/follower limit coupling and b) accounts that are followed by a lot of others while not following many people themselves. The latter category is obviously celebrity accounts such as David Lynch, Paul Krugman, or Karl Lagerfeld. These brands are simply using Twitter as a one-to-many medium. But what about the first category? A quick examination confirms that these are Internet professionals, mostly from marketing and journalism. These accounts are not built on a transfer of social capital (celebrity status) from the outside, but on continuous cross-platform networking and diligent posting. They have to play by different rules than celebrities, reciprocating follower connections and interacting with other accounts to abide by the tacit rules of the twitterverse. They have built their accounts into mass media as well but had to work hard to get there.

These two examples show how useful data visualization can be in drawing our attention to trends in the data that may be completely invisible when looking at the tables only.

This blogpost is somewhat of an experiment that I hope will turn into a series. I have started to work seriously on a book that will suggest a somewhat different take on understanding computing and particularly contemporary software deployed on the Internet. A large part of that work consists of historical analysis and in this context I am (re)reading many of the seminal papers of the information and computer sciences. What is striking about these texts is not only their content but their far-reaching influence on the landscape of technological concepts and, often enough, on the actual technological developments that followed. Writing software today is in most cases an articulation that takes place in an extremely dense space of established languages, APIs, frameworks, and libraries but also of concepts, methodologies, best practices, tacit assumptions, strategies, and community rules. There is so much “old” in every “new” but many concepts have become so pervasive, so dominant that we no longer see them as the particularities they in fact are. Being canonical, they become second nature. But many of these path-defining moments can be retraced and given the pervasiveness of computers today, an archeology of computing is, in a way, an archeology of our culture.

One of the ways to do such an archeology may simply consist in trying to read seminal computer and information science papers sideways, not (only) as technological proposals, but as political and cultural projects that combine a (most often critical) analysis of a status quo with a prescriptive take on what a more ideal setting could or should look like. Technology is, in that sense, a way of relating to society, a means of contributing that is political in a very different way than the traditional arenas of governance and debate. What I would like to suggest is that this aspect of technological writing (science papers but also reports, RFCs, norms, proposals, documentation, etc.) is not examined nearly enough, particularly when it comes to techniques that are related to software. Our view of technology is still very much shaped by the physical machine – the box, the screen, the keyboard – perhaps also because these physical parts are closer to our bodies, more visible and easier to integrate into the cognitive practices of a culture that, paradoxically, is able to produce extremely sophisticated mechanisms while being quite inept when it comes to understanding the role technical objects play in constituting its very fabric.

In my view, the central mistake is to assimilate technology to techné and be done with it. Perhaps I am wrong, but I cannot shake the feeling that very few scholars in the humanities and social sciences are prepared to accord to technological creation the same depth, complexity, variety, the same imbrication in society, the same amount of “humanity” as literature or artistic creation in general. This unwillingness to really engage technology beyond the surface leads to the familiar reflex-like reactions, both positive and negative, that seem to dominate public debates on “hot” topics like social networking, privacy on the Internet, or computer games.

So what I am looking for is a different way of understanding technology that subscribes neither to an engineering perspective concerned with function nor to a purely “culturalist” analysis that sees only imaginaries, symbols, and metaphors, thereby risking losing the machine in the machine. So, today, a first try, and why not start with a big one.

In 1970, Edgar F. Codd, a British computer scientist who moved to the US in the 1940s, published one of the most influential papers in the history of computer science, A Relational Model of Data for Large Shared Data Banks (doi:10.1145/362384.362685), in which he proposed a concept for the construction of database systems built around the central idea of separating the logical organization of information from the way it is stored on a physical storage medium. While the usefulness of such a separation may seem very obvious from today’s viewpoint, Codd’s paper stirred a virulent debate and his employer, IBM, was quite reluctant when it came to turning the proposal into a product (it took eight years for the first relational database system to make it to the market). When discussing Codd’s work, we should be very suspicious of the popular narratives of technological development as a series of inventions, or worse, ideas. To separate logical organization from physical storage had been a common practice in libraries for a long time: the library catalogue, in combination with some basic shelf logistics, allows for very different ways of recording books – alphabetically, by subject, and so on. But technologies are not simply ideas; Gene Roddenberry did not invent beaming. As science and technology studies have shown many times, a successful scientific “discovery” or a technological “invention” is somewhat of a “perfect storm”: many pieces have to fall into place, many different actors have to be mobilized, and most often there is talking, writing, demonstrating, debating, and a whole lot of fuss. As computer history shows, having an idea (Babbage) or even building a functioning machine (Zuse) may simply not be enough to establish a technology. Since the industrial revolution, technologies have increasingly been systems that require logistics, markets, organizational reform, or an installed user base.

In our case, the really interesting thing is not necessarily the abstract idea for what has become today’s omnipresent relational database, but the way Codd builds an idea into a technological concept, as an argument as well as a potential system. To start, let’s quote the abstract in full:

Future users of large data banks must be protected from having to know how the data is organized in the machine (the internal representation). A prompting service which supplies such information is not a satisfactory solution. Activities of users at terminals and most application programs should remain unaffected when the internal representation of data is changed and even when some aspects of the external representation are changed. Changes in data representation will often be needed as a result of changes in query, update, and report traffic and natural growth in the types of stored information.

Existing noninferential, formatted data systems provide users with tree-structured files or slightly more general network models of the data. In Section 1, inadequacies of these models are discussed. A model based on n-ary relations, a normal form for data base relations, and the concept of a universal data sublanguage are introduced. In Section 2, certain operations on relations (other than logical inference) are discussed and applied to the problems of redundancy and consistency in the user’s model. (p. 377)

First of all, who are these users that have to be “protected”? In 1970, this is obviously not (yet) the manager sitting in front of a screen and keyboard but rather the application programmer who will implement the “query, update, and report” functions every larger organization relies on for management. These users/programmers had been forced to make changes in storage structures whenever requirements changed in a significant way. This was not just an onerous task but also a source of potentially crippling problems as every adaptation risked breaking existing applications. Without explicit reference, Codd’s work is directly related to what has come to be known as the “software crisis” that led to the emergence of software engineering. The separation of systems into black-boxed modules that communicated via well-specified interfaces was one of the solutions put forward to counter the explosion of complexity that followed the introduction of computers into large-scale, real-world (business) organizations. Seen in this light, the relational model and the concept of “data independence” (p. 377) together form an extremely powerful agent for the division of labor that cleanly separates the engineering of a database system from the specification of data structures, adding to the ground work for the concept of end-user software that we know today.

So what is Codd’s proposal? For a reader trained in the humanities trying to read a paper like the one in question (even the first half, which does not use any formal notation), adaptations to the habitual reading style have to be made to get something useful out of it. Much like mathematics, computer science deploys language quite differently than the humanities (except for analytical philosophy): language, here, is not (only) narrative and argumentative, it aims at building a demonstration, which is most certainly a rhetorical form, but a very formal one that follows a convention consisting of laying out a space of thinking through a series of very precise definitions, which often attribute quite specific significations to words taken from everyday language. Miss one of these definitions and the whole pyramid crumbles. In Codd’s case, the basic building block is the concept of relation (taken from mathematical set theory, like most reasoning about databases), which designates a basic form for structuring data where every abstract entity is composed of a series of attributes. This data structure can be “filled” with entries (rows). If you’re familiar with SQL (today’s standard query language, derivative of Codd’s work), relation (or rather relationship, the unordered version of relation in Codd’s paper; nowadays, relation is used for Codd’s relationship and I’ll follow that convention) is simply the structure of a table. In practice, Codd suggests building databases that represent all data in a form that looks like this:

students:

| name | email | major |
|------|-------|-------|
| Jack | jack@email.com | history |
| Mary | mary@email.com | science |

Here, students is a relation composed of three attributes (name, email, major). Jack is a row (entry), Mary is another one. What was new in this definition is obviously not the notion of the table, but rather the idea to define a relation as a purely abstract and unordered structure, a logical construct that did not specify in any way how it was to be stored on a physical medium. An important indicator for this decoupling is Codd’s comment that “the ordering of rows is immaterial” (p. 379). Without stating it explicitly, Codd shifts the construction of order from the storage to the query. More on this later.
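For readers who think more easily in code, the idea of a relation as an unordered structure can be sketched in Python by modeling it as a set of rows. This is an illustration of the principle only, not of how any database system is built; the `Student` type and the variable names are my own.

```python
from typing import NamedTuple


class Student(NamedTuple):
    """One row (entry) of the students relation."""
    name: str
    email: str
    major: str


# A relation modeled as a set of rows: no duplicates, no inherent order.
students_a = {
    Student("Jack", "jack@email.com", "history"),
    Student("Mary", "mary@email.com", "science"),
}
students_b = {
    Student("Mary", "mary@email.com", "science"),
    Student("Jack", "jack@email.com", "history"),
}

# Both literals denote the same relation, whatever order the rows were written in.
same_relation = students_a == students_b

# Order only appears once somebody asks for it.
by_name = sorted(students_a, key=lambda row: row.name)
by_major_desc = sorted(students_a, key=lambda row: row.major, reverse=True)
```

The two sets compare as equal, and any particular ordering exists only in the result of a request such as `sorted(...)`, which is the shift from storage to query in miniature.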

The second key concept is the notion of primary key and its corollary, the foreign key. Let’s add a primary key to our table:

students:

| key | name | email | major |
|-----|------|-------|-------|
| 1 | Jack | jack@email.com | history |
| 2 | Mary | mary@email.com | science |

The primary key is a way of addressing a row of data unambiguously (student #1 is Jack and no other student, keys have to be unique). The idea of a foreign key means to simply use a primary key in another table. Instead of doubling information (which may lead to all kinds of update problems as well as storage overhead), we’re simply “pointing” from one table to another. Take the relation (table) “grades”:

grades:

| students.id | english | history | geography |
|-------------|---------|---------|-----------|
| 1 | C | C | C |
| 2 | B | B | B |

In this case, students.id (relation.attribute is the notation we still use today) is the foreign key linking to the primary key of our “students” relation. In practice this means that Jack had all Cs and Mary all Bs in the three classes they took. Codd shows that using this concept of primary/foreign key, very complex organizations of data can be produced while keeping the basic principles very simple. While both of the dominant models of the time, the tree and network models, were based on data hierarchies (that had to be rebuilt if informational practices changed), the relational model is much more flexible.
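The pointing from one table to another can be tried out with the SQLite engine that ships with Python. The following is a minimal sketch under a few assumptions of mine: the column names `id` and `student_id`, the use of an in-memory database, and the decision to switch on SQLite’s foreign key enforcement, which is off by default.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when asked

conn.execute("""
    CREATE TABLE students (
        id    INTEGER PRIMARY KEY,
        name  TEXT NOT NULL,
        email TEXT NOT NULL,
        major TEXT NOT NULL
    )
""")
conn.execute("""
    CREATE TABLE grades (
        student_id INTEGER NOT NULL REFERENCES students(id),
        english    TEXT,
        history    TEXT,
        geography  TEXT
    )
""")
conn.executemany("INSERT INTO students VALUES (?, ?, ?, ?)", [
    (1, "Jack", "jack@email.com", "history"),
    (2, "Mary", "mary@email.com", "science"),
])
conn.executemany("INSERT INTO grades VALUES (?, ?, ?, ?)", [
    (1, "C", "C", "C"),
    (2, "B", "B", "B"),
])

# Follow the pointer from grades back to students.
jack_grades = conn.execute("""
    SELECT s.name, g.english, g.history, g.geography
    FROM grades AS g
    JOIN students AS s ON s.id = g.student_id
    WHERE s.name = ?
""", ("Jack",)).fetchall()

# A grade row that points at a non-existent student is rejected.
try:
    conn.execute("INSERT INTO grades VALUES (99, 'A', 'A', 'A')")
    dangling_rejected = False
except sqlite3.IntegrityError:
    dangling_rejected = True
```

Jack’s name is stored exactly once, in students; the grades table only carries his key, and the join reassembles the two at query time.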

To put things into perspective: most of the world’s structured data is currently organized according to this basic form. I would guess that despite the current NoSQL hype (companies like Google and Facebook use even simpler and highly customized data structures for ultra-high speed access) more than 90% of all Web applications have a database backend based on one of the many implementations of the relational model, e.g. Oracle, MS SQL Server, MySQL, PostgreSQL, to name just a few. But data organization is only the first half of the proposal.

The next step in Codd’s paper is to reflect on a language that would allow for data retrieval and manipulation by addressing the logical organization of the data rather than its physical storage. Rather than specifying the physical location of the data, saying “I want the entries from address 0x00000 to address 0xfffff” (and we would have to know these addresses beforehand!), we could simply ask for all the entries in the table students. Remember that above, I indicated that Codd declared entry order as “immaterial”? This is because the ordering of data is no longer (merely) a property of the archive. Ordering is done in the language we use to get the data: “I want all the students, sorted alphabetically by name” (SQL: SELECT * FROM students ORDER BY name). The data structure of course has to be prepared for the kind of queries we will want to make, but in our example, I could group my list by major, sort it by email, or, by “joining” our two tables, order by grade average. More elaborate queries would allow me to select the 25 percent of students with the best grade average or to plot the grade evolution over the years if I have that data.
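A small experiment with Python’s built-in SQLite module shows order being produced by the query rather than by storage. It is only a sketch: the third student, Zoe, is a hypothetical row added so that the different orderings are distinguishable, and the rows are deliberately inserted in no particular order.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE students (name TEXT, email TEXT, major TEXT)")

# Rows are deliberately inserted in no particular order.
conn.executemany("INSERT INTO students VALUES (?, ?, ?)", [
    ("Mary", "mary@email.com", "science"),
    ("Zoe", "zoe@email.com", "history"),   # hypothetical extra row
    ("Jack", "jack@email.com", "history"),
])

# The same stored data, three different views, each defined by the query.
by_name = [row[0] for row in conn.execute(
    "SELECT name FROM students ORDER BY name")]
by_email_desc = [row[0] for row in conn.execute(
    "SELECT name FROM students ORDER BY email DESC")]
per_major = conn.execute(
    "SELECT major, COUNT(*) FROM students GROUP BY major ORDER BY major"
).fetchall()
```

None of the three queries says anything about where or how the rows are stored; each simply describes the result it wants.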

A data retrieval and manipulation language would have to do more than just query and this quote summarizes the requirements:

A set so specified may be fetched for query purposes only, or it may be held for possible changes. Insertions take the form of adding new elements to declared relations without regard to any ordering that may be present in their machine representation. Deletions which are effective for the community (as opposed to the individual user or sub-communities) take the form of removing elements from declared relations. (p. 382)

These are the four building blocks of every database system I have worked with (again using SQL): SELECT (query a database using different parameters for searching and ordering, e.g. get all students with a certain grade average), INSERT (insert new data into a table, e.g. add a new student into students), UPDATE (change data, e.g. change a student’s grade after accepting a bribe), DELETE (erase data, e.g. expel a student for offering you a bribe). Such a language – Codd will propose the Alpha language in the 1970s but IBM’s SQL (structured query language; Larry Ellison of Oracle actually was the first to bring a SQL-based product to the market and consequently became one of the richest people on the planet) largely won out – would again “protect” the user from having to interact with anything but the data organization specified in the terms of the relational model.
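The four operations can be run one after the other against an in-memory SQLite database from Python. Again a minimal sketch: the table layout and the sample values are my own, and a real application would add error handling and transactions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE students (id INTEGER PRIMARY KEY, name TEXT, major TEXT)")

# INSERT: add new rows to a declared relation.
conn.executemany("INSERT INTO students (id, name, major) VALUES (?, ?, ?)", [
    (1, "Jack", "history"),
    (2, "Mary", "science"),
])

# SELECT: fetch a set specified by a condition.
historians = conn.execute(
    "SELECT name FROM students WHERE major = ?", ("history",)).fetchall()

# UPDATE: change data in place.
conn.execute("UPDATE students SET major = ? WHERE id = ?", ("geography", 1))

# DELETE: remove a row from the relation.
conn.execute("DELETE FROM students WHERE id = ?", (2,))

remaining = conn.execute(
    "SELECT id, name, major FROM students ORDER BY id").fetchall()
```

At no point does the program refer to files, addresses, or record positions; every statement is phrased in terms of the table and its attributes.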

In the rest of the paper, Codd tackles a series of problems that could arise in the implementation of actual systems (and what we would call a “storage engine” today) based on the relational model, but this part is less interesting for my purposes.

I would like, however, to propose a couple of comments that may help putting things into a larger perspective:

1) The central critique of Codd’s proposals came from programmers and engineers that abhorred the loss of control (and potentially performance) over the actual organization of data storage on the physical medium and the dangers such a black-boxing may pose to data integrity in the case of dysfunction or accident. But in the 1980s the demands for more flexibility and cost control won the day, driven by lower hardware costs and better techniques for securing data. This evolution towards layering, modularity, and a general “abstraction” from the hardware has happened in all fields of computing and, indeed, the loss of control and visibility is most often the prime concern. In a sense, software has followed a similar trajectory as social organization, from community to society (and back, whenever there is a new frontier to homestead), that is from small-scale teams and organizations to the large-scale efforts of companies like Microsoft or Oracle. Abstraction techniques like Codd’s played a central role here as enablers of division of labor. They also permitted – and this is crucial – a much tighter integration between management processes and information technology. The moment information structures are “liberated” from questions of physical storage, they can be implemented in flexible, end-user friendly software packages, which makes it possible for management to interact much more directly with data. The rise of Business Intelligence and Decision Support Systems would have been much less spectacular without the relational model turning “information” into the malleable material it has become.

2) While I am of course tempted to write something like “The decoupling of the logical structure of data from physical storage and the immense power and flexibility afforded by query languages have led to the emergence of late-modern network economies.”, this would be too quick and easy. The relational database, the powerful query languages, and the business control and intelligence functions they enable are certainly a central part of the informational infrastructure that supports contemporary economic organization. Data, once collected, can be interrogated from every possible angle and automatic reporting (which is no more than a series of very elaborate SQL queries over a large number of tables) has introduced incredible speed into business processes, while keeping up an illusion of control. Illusion, because just like any formal model of reality, data and query models are necessarily reductionist. At the same time, databases are themselves part of a much longer trend in management that started with systems management in the late 19th century. We’re snowballing from one information age to the next and technologies like the relational model are as much enablers as results, causes and effects.

3) The relational database is part of a much larger transformation in how documents, information, and knowledge are handled. From the library catalog to documentation centers and further on to data banks, information retrieval, and data mining, we see a steady growth in the attention being paid to the logistics, organization, and “exploitation” of an ever faster growing mountain of texts, images, sounds, and so forth. The relational model not only helps with classic tasks such as storage and retrieval, it shares in the birth of what could be called the “automated production of knowledge”, i.e. the creation of new information from cross-referencing, comparing, statistically examining, synthesizing, and representing large quantities of information. Whether these automated processes (think reporting, data mining, etc.) produce “real” knowledge is a rather stale question; it is much more important to emphasize how businesses and other organizations have come to depend on these tools for everyday management and decision-making. Query languages built on Codd’s proposal constitute the foundation for these developments.

There would be much more to say about Codd’s work and the relational database but I want to close by going back to the initial question about reading computer science from a humanities perspective. A classic analysis of language and use of metaphors would probably have proceeded quite differently and would have homed in on things like the “protection” of users or citations such as this footnote:

Naturally, as with any data put into and retrieved from a computer system, the user will normally make far more effective use of the data if he is aware of its meaning. (p. 380)

Imaginaries are indeed important aspects of an archeology of computing but even in written form, computer science is, in a way, always looking elsewhere, beyond the text, and Codd points to this “elsewhere” in his last paragraph:

Nevertheless, the material presented should be adequate for experienced systems programmers to visualize several approaches. (p. 387)

What Codd asks the reader to visualize is the laboratory of computer science, the site where things come together, the working system. While the discursive aspects are certainly important, I feel that function is central to the poetics of the technical sciences and if we want to understand their cultural significance we have to read them both as texts and as functional blueprints.

If we want to understand the plethora of very specific roles computers play in today’s world, the question “What is software?” is inevitable. Many different answers have been articulated from different viewpoints and different positions – creator, user, enterprise, etc. – in the networks of practices that surround digital objects. From a scholarly perspective, the question is often tied to another one, “Where does software come from?”, and is connected to a history of mathematical thought and the will/pressure/need to mechanize calculation. There we learn for example that the term “algorithm” is derived from the name of the Persian mathematician al-Khwārizmī and that in mathematical textbooks from the Middle Ages, the term algorism is used to denote the basic arithmetic techniques – that we now learn in grammar school – which break down e.g. the calculation of a multiplication with large numbers into a series of smaller operations. We learn first about Pascal, Babbage, and Lady Lovelace and then about Hilbert, Gödel, and Turing, about the calculation of projectile trajectories, about cryptography, the halting problem, and the lambda calculus. The heroic history of bold pioneers driven by an uncompromising vision continues into the PC (Engelbart, Kay, the Steves, etc.) and Network (Engelbart again, Cerf, Berners-Lee, etc.) eras. These trajectories of successive invention (mixed with a sometimes exaggerated emphasis on elements from the arsenal of “identity politics”, counter-culture, hacker ethos, etc.) are integral to answering our twin question, but they are not enough.

A second strand of inquiry has developed in the slipstream of the monumental work by economic historian Alfred Chandler Jr. (The Visible Hand) who placed the birth of computers and software in the flux of larger developments like industrialization (and particularly the emergence of the large-scale enterprise in the late 19th century), bureaucratization, (systems) management, and the general history of modern capitalism. The books by James Beniger (The Control Revolution), JoAnne Yates (Control through Communication and more recently Structuring the Information Age), James W. Cortada (most notably The Digital Hand in three volumes), and others deepened the economic perspective while Paul N. Edwards’ The Closed World or Jon Agar’s The Government Machine look more closely at the entanglements between computers and government (bureaucracy). While these works supply a much-needed corrective to the heroic accounts mentioned above, they rarely go beyond the 1960s and do not aim at understanding the specifics of computer technology and software beyond their capacity to increase efficiency and control in information-rich settings (I have not yet read Martin Campbell-Kelly’s From Airline Reservations to Sonic the Hedgehog; the title is a downer but I’m really curious about the book).

Lev Manovich’s Language of New Media is perhaps the most visible work of a third “school”, where computers (equipped with GUIs) are seen as media born from cinema and other analogue technologies of representation (remember Computers as Theatre?). Clustering around an illustrious theoretical neighborhood populated by McLuhan, Metz, Barthes, and many others, these works used to dominate the “XY studies” landscape of the 90s and early 00s before all the excitement went to Web 2.0, participation, amateur culture, and so on. This last group could be seen as a fourth strand but people like Clay Shirky and Yochai Benkler focus so strongly on discontinuity that the question of historical filiation is simply not relevant to their intellectual project. History is there to be baffled by both present and future.

This list could go on, but I do not want to simply inventory work on computers and software but to make the following point: there is a pronounced difference between the questions “What is software?” and “What is today’s software?”. While the first one is relevant to computational theory, software engineering, analytical philosophy, and (curiously) cognitive science, there is no direct line from universal Turing machines to our particular landscape with the millions of specific programs written every year. Digital technology is so ubiquitous that the history of computing is caught up with nearly every aspect of the development of western societies over the last 150 years. Bureaucratization, mass-communication, globalization, artistic avant-garde movements, transformations in the organization of labor, expert movements in public administrations, big science, library classifications, the emergence of statistics, minority struggles, two world wars and too many smaller conflicts to count, accounting procedures, stock markets and the financial crisis, politics from fascism to participatory democracy,… – all of these elements can be examined in connection with computing, shaping the tools and being shaped by them in return. I am starting to believe that for the humanities scholar or the social scientist the question “What is software?” is only slightly less daunting than “What is culture?” or “What is society?”. One thing seems sure: we can no longer pretend to answer the latter two questions without bumping into the first one. The problem for the author, then, becomes to choose the relevant strands, to untangle the mess.

In my view, there is a case to be made for a closer look at the role the library and information sciences played in the development of contemporary software techniques, most obviously on the Internet, but not exclusively. While Bush’s Memex has perhaps been commented on somewhat beyond its actual relevance, the work done by people such as Eugene Garfield (citation analysis), Calvin M. Mooers (information retrieval), Hans-Peter Luhn (KWIC), Edgar Codd (relational database) or Gerard Salton (the vector space model) from the 1950s on has not been worked on much outside of specialist circles – despite the fact that our current ways of working with information (yes, this includes your Facebook profile, everything Google is doing, cloud computing, mobile applications and all the other cool stuff Wired writes about) left behind the logic of the library catalog quite some time ago. This is also where today’s software comes from.

When talking about the politics of the social Web and particularly online networking, the first issue coming up is invariably the question of privacy and its counterpart, surveillance – big brother, corporations bent on world dominance, and so on. My gut reaction has always been “yeah, but there’s a lot more to it than that” and on this blog (and hopefully in a book in the not too distant future) I’ve been trying to sort out some of the political issues that do not pertain to surveillance. For me, social networking platforms are more relevant to politics as marketing than as surveillance. Not that these tools cannot function quite formidably to spy on people, but it is my impression that contemporary governance relies more on other principles than on the gathering of intelligence about individual citizens (although it does that, too). But I’ve never been very pleased with most of the conceptualizations of “post-disciplinarian” mechanisms of power; even Deleuze’s Post-scriptum sur les sociétés de contrôle, although full of remarkable leads, does not provide a fleshed-out theoretical tool – and it does not fit well with recent developments in the Internet domain.

But then, a couple of days ago I finally started to read the lectures Foucault gave at the Collège de France between 1971 and 1984. In the 1977-1978 term the topic of that class was “Sécurité, Territoire, Population” (STP, Gallimard, 2004) and it holds, in my view, the key to a quite different perspective on how social networking platforms can be thought of as tools of governance involved in specific mechanisms of power.

STP can be seen as both an extension and reevaluation of Foucault’s earlier work on the transition from punishment to discipline as the central form in the exercise of power, around the end of the 18th century. The establishing of “good practice” is central to the notion of discipline, and disciplinary settings such as schools, prisons or hospitals serve most of all as means for instilling these “good practices” into their subjects. Jeremy Bentham’s Panopticon – a prison architecture that allows a single guard to observe a large population of inmates from a central control point – has in a sense become the metaphor for a technology of power that, in Foucault’s view, is part of a much more complex arrangement of how sovereignty can be performed. Many a blogpost has been dedicated to applying the concept to online social networking.

Curiously though, in STP, Foucault calls the Panopticon both modern and archaic, and he goes as far as dismissing it as the defining element of the modern mechanics of power; in fact, the whole course is organized around the introduction of a third logic of governance besides (and historically following) “punishment” and “discipline”, which he calls “security”. This third regime no longer focuses on the individual as a subject that has to be punished or disciplined but on a new entity, a statistical representation of all individuals, namely the population. The logic of security, in a sense, gives up on the idea of producing a perfect status quo by reforming individuals and begins to focus on the management of averages, acceptable margins, and homeostasis. With the development of the social sciences, society is perceived as a “natural” phenomenon in the sense that it has its own rules and mechanisms that cannot be so easily bent into shape by disciplinary reform of the individual. Contemporary mechanisms of power are, then, not so much based on the formatting of individuals according to good practices but rather on the management of the many subsystems (economy, technology, public health, etc.) that affect a population so that this population will refrain from starting a revolution. Foucault actually comes pretty close to what Ulrich Beck will call, eight years later, the Risk Society. The sovereign (Foucault speaks increasingly of “government”) assures its political survival no longer primarily through punishment and discipline but by managing risk by means of scientific arrangements of security. This means not only external risk, but also risk produced by imbalance in the corps social itself.

I would argue that this opens another way of thinking about social networking platforms in political terms. First, we would look at something like Facebook in terms of population, not in terms of the individual. Governmental structures and commercial companies are only in rare cases interested in the doings of individuals – their business is with statistical representations of populations because this is the level contemporary mechanisms of power (governance as opinion management, market intelligence, cultural industries, etc.) preferably operate on. And second – and this really is a very nasty challenge indeed – we would probably have to give up on locating power in specific subsystems (say, information and communication systems) and trace the interplay between the many different layers that compose contemporary society.

The term “determine” is often used rather lightly by those who write about the political dimension of technology. At the same time the accusation of “technological determinism” – albeit sometimes right on target – is being used as a means to exclude discussion of technological parameters from the humanities and the social sciences. But what is actually meant by “technological determinism”? In my view, there are three basic forms of thinking about determinism when it comes to technology:

The first is very much connected to French anthropologist André Leroi-Gourhan and holds that technological evolution is largely self-determined. His notion of “tendance technique” takes its inspiration from evolutionary theory in the sense that technology evolves blindly but follows the paths carved out by the “choices” made throughout its phylogenesis (this has been called “cumulative causation” or “path dependency” by some). Leroi-Gourhan’s perspective has been developed further by Deleuze and Guattari in their concept of “phylum” and, most notably, by philosopher of technology Gilbert Simondon (whose work is finally going to be translated into English, hopefully still in 2008) who sees the process of technological evolution as “concretization”, going from modular designs to always more integrated forms. “Technological determinism” would mean, in this first sense, that technology is not the result of social, economic, or cultural processes but largely independent, forcing the other sectors to adapt. Technology is determined by its inner logic.

A more colloquial meaning of technological determinism is, of course, connected to the

- Look at design studies where determinism has been replaced by the quite elegant notion of affordance.

- Read more Actor-Network Theory.

- Think about what Roland Barthes meant by “interpretation”.

- Dust off your copy of Hall’s “encoding/decoding”.

- Work as a software developer and marvel at the infinity of ways users find to use, appropriate, and break your applications.