There are many different ways of making sense of large datasets. Using network visualization is one of them. But what is a network? Or rather, which aspects of a dataset do we want to explore as a network? Even social services like Twitter can be graphed in many different ways. Friend/follower connections are an obvious choice, but retweets and mentions can be used as well to establish links between accounts. Hashtag similarity (two users who share a tag are connected, the more they share, the closer) is yet another method. In fact, when we shift from interactions to co-occurrences, many different things become possible. Instead of mapping user accounts, we can, for example, map hashtags: two tags are connected if they appear in the same tweet and the number of co-occurrences defines link strength (or “edge weight”). The Mapping Online Publics project has more ideas on this question, including mapping over time.
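To make the hashtag co-occurrence idea concrete, here is a minimal sketch of how edge weights could be counted from raw tweet text. The function name, the regular expression, and the sample tweets are made up for illustration; this is not the pipeline used for the project.

```python
import re
from collections import Counter
from itertools import combinations

# Simplified hashtag pattern (assumption): "#" followed by word characters.
HASHTAG = re.compile(r"#(\w+)")

def cooccurrence_edges(tweets):
    """Count how often each pair of hashtags appears in the same tweet."""
    weights = Counter()
    for text in tweets:
        # Lowercase and deduplicate so a tag repeated in one tweet counts once.
        tags = sorted({tag.lower() for tag in HASHTAG.findall(text)})
        for a, b in combinations(tags, 2):
            weights[(a, b)] += 1  # edge weight = number of shared tweets
    return weights

# Invented sample data, only for illustration.
sample_tweets = [
    "Results are coming in #cantonales #politique",
    "#Libye and #cantonales dominate the news",
    "Debate tonight #politique #cantonales",
]
edges = cooccurrence_edges(sample_tweets)
```

Each key in `edges` is an alphabetically ordered pair of tags, so the same pair is never counted under two different keys, and the value can be used directly as edge weight.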

In the context of the IPRI research project we have been following 25K Twitter accounts from the French twittersphere. Here is a map (size: occurrence count / color: degree / layout: gephi with OpenOrd) of the hashtag co-occurrences for the 10,000 hashtags used most often between February 15, 2011 and April 15, 2011 (clicking on the image gets you the full map, 5MB):

The main topics over this period were the regional elections (“cantonales”) and the Arab spring, particularly the events in Libya. The Japan earthquake is also very prominent. But you’ll also find smaller events leaving their traces, e.g. star designer Galliano’s antisemitic remarks in a Paris restaurant. Large parts of the map show ongoing topics, cinema, sports, general geekery, and so forth. While not exhaustive, this map is currently helping us to understand which topics are actually “inside” our dataset. This is exploratory data analysis at work: rather than confirming a hypothesis, maps like this can help us get a general understanding of what we’re dealing with and then formulate more precise questions from there.

In August 2010, Edinburgh sociologist Donald MacKenzie (whose book An Engine, not a Camera is an outstanding piece of scholarship) wrote an article in the Financial Times titled Unlocking the Language of Structured Securities, in which he discusses a software suite for financial analysis called Intex and compares it to a language that allows one to see and interact with the world in certain ways rather than others. MacKenzie describes his first encounter with Intex as a moment of revelation that quickly turned into doubt:

The psychological effect was striking: for the first time, I felt I could understand mortgage-backed securities. Of course, my new-found confidence was spurious. The reliability of Intex’s output depends entirely on the validity of the user’s assumptions about prepayment, default and severity. Nevertheless, it is interesting to speculate whether some of the pre-crisis vogue for mortgage-backed securities resulted from having a system that enabled neophytes such as myself to feel they understood them.

While MacKenzie does not go as far as imputing the recent financial crisis to a piece of software, he points out that Intex is not recursive in its mode of analysis: when evaluating a complex financial asset, for example one of the now (in)famous CDOs that are made up of other assets, themselves combining further values, and so on, Intex does not follow the trail down to the basic entities (the individual mortgage) but calculates risk only from the rating of the asset in question. MacKenzie argues that Goldman-Sachs’ 2006 decision to basically get out of mortgage-based securities may well be a result of their commitment to go beyond available tools by implementing a (very costly) “bottom-up” approach that builds its evaluation of an asset by calculating up from the basic units of value. The card-house character of these financial instruments could become visible by changing tools and thereby changing perspective or language. Software makes it possible to implement very different practices or languages and to make them pervasive; but how does a company choose one strategy over another? What are the organizational and “cultural” factors that led Goldman-Sachs to change its approach? These may be the truly challenging questions here, although they may never get answered. But they lead to a methodological lesson.

The particular strength of systems like Intex lies in their capacity to black-box evaluation strategies behind a neat interface that allows users to immediately operate on the underlying models, weaving these models into their decisions and practices. Conceptually, we understand the ways in which software shapes action better and better but the empirical complexity of concrete settings is positively daunting even outside of the realm of financial markets. What I take from MacKenzie’s work is that in order to understand the role of software, we have to be very familiar with the specific terrain a system is embedded in, instead of bringing overarching assumptions to the table. Software is a means for building structure and this building is always happening in particular organizational settings that are certainly caught up in larger trends but also full of local challenges, politics, and knowledge. Programs are at the same time a structuring backdrop to practice and part of a strategic repertoire that actors have at their disposal.

The case of financial software indicates that market behavior standardizes around available tools, which leads to the systemic delegation of certain decision processes to software makers. This may result in a particular type of herd behavior and potentially in imbalance and crisis. Somewhat ironically, it is Goldman-Sachs that showed the potential of going against the grain by questioning programmed wisdom. That the company recently paid $550M in fines for abusing their analytical advantage by betting against a CDO they were selling to customers as an investment indicates that ethics and cunning are unfortunately two different pairs of shoes…

When Lawrence Lessig famously stated that “code is law”, the most simple and striking example was AOL’s decision to – arbitrarily – limit the number of people that could log into a chat room at the same time to 23. While the social consequences of this rule were quite far-reaching, they could be traced to a simple line of text somewhere in a script stating that “limit = 23;” (apparently someone changed that to “limit = 36;” a bit later).

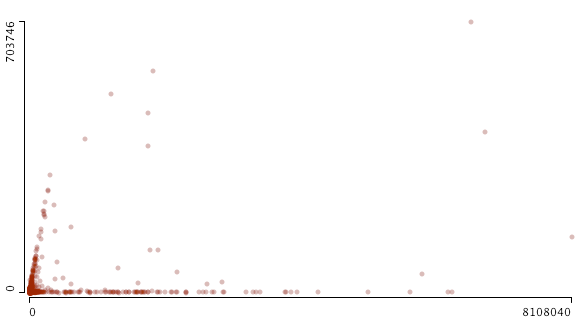

When starting to work on a data exploration project linking Web sites to Twitter, I wasn’t aware that the microblogging site had similar limitations built in. Sometime in 2008, Twitter apparently capped the number of people one can follow at 2000. I stumbled over this limit by accident when graphing friends and followers for the 24K+ accounts we are following for our project:

This scatterplot (made with Mondrian, x: followers / y: friends) shows the cutoff quite well but it also indicates that things are a bit more complicated than “limit = 2000;”. From looking at the data, it seems that a) beyond 2000, the friend limit is directly related to the number of followers an account has and b) some accounts are exempt from the limit. Just like everywhere else, there are exceptions to the rule and “all are equal before the law” (UN Declaration of Human Rights) is a standard that does not apply in the context of a private service.

While programmed rules and limits play an important role in structuring possibilities for communication and exchange, a second graph indicates that social dynamics leave their traces as well:

This is the same data but zoomed out to include the accounts with the highest friend and follower count. There is a distinct bifurcation in the data, two trends emerging at the same time: a) accounts that follow the friend/follower limit coupling and b) accounts that are followed by a lot of others while not following many people themselves. The latter category is obviously celebrity accounts such as David Lynch, Paul Krugman, or Karl Lagerfeld. These brands are simply using Twitter as a one-to-many medium. But what about the first category? A quick examination confirms that these are Internet professionals, mostly from marketing and journalism. These accounts are not built on a transfer of social capital (celebrity status) from the outside, but on continuous cross-platform networking and diligent posting. They have to play by different rules than celebrities, reciprocating follower connections and interacting with other accounts to abide by the tacit rules of the twitterverse. They have built their accounts into mass media as well but had to work hard to get there.

These two examples show how useful data visualization can be in drawing our attention to trends in the data that may be completely invisible when looking at the tables only.

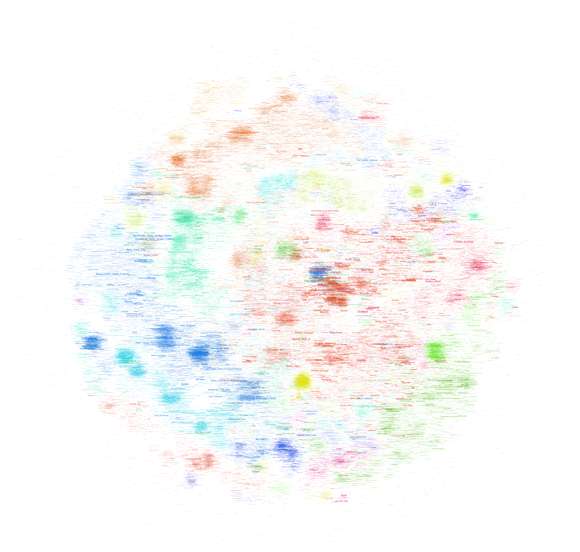

After trying to map the French version of Wikipedia a couple of days ago, I’ve played around with the much bigger English version (the dbpedia file I worked with contains 130M links between Wikipedia pages in a cool 20GB) this week-end and thanks to a rare lucid moment I was able to transform that thing into a .gdf that is small enough to be opened in gephi. I settled for the 45K pages with the most links (undirected) and started mapping. All three maps I built use the OpenOrd layout algorithm (1000 iterations). The first uses the modularity measure for “community” detection and colors text accordingly (click on the image for a very large version):

The second uses a grey color scale to express the degree (number of links) of a page:

Finally, the same map, but with a different color scale (light blue => yellow => red):

Every version helps with certain readability issues and you can download all three of the maps as a big .psd so you can easily switch between the different modes.
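The selection step mentioned above (keeping only the pages with the most undirected links) can be sketched roughly as follows. This is not the script used for the maps: the function names and the tiny link list are invented, and "most links" is interpreted here as the number of distinct neighbours, which is an assumption.

```python
from collections import defaultdict

def top_pages_by_degree(links, n):
    """Return the n pages with the most distinct neighbours (links treated as undirected)."""
    neighbours = defaultdict(set)
    for source, target in links:
        if source == target:
            continue  # ignore self-links
        neighbours[source].add(target)
        neighbours[target].add(source)
    # Sort by descending degree, then by name so that ties are broken predictably.
    ranked = sorted(neighbours, key=lambda page: (-len(neighbours[page]), page))
    return ranked[:n]

def induced_links(links, kept):
    """Keep only the links whose two endpoints both survived the selection."""
    kept = set(kept)
    return [(s, t) for s, t in links if s in kept and t in kept]

# Invented toy data; the real input would be the dbpedia page link list.
links = [
    ("France", "Paris"), ("Paris", "France"),
    ("France", "Europe"), ("Europe", "Paris"),
    ("Paris", "Seine"), ("Obscure_page", "France"),
]
kept = top_pages_by_degree(links, 3)
small_graph = induced_links(links, kept)
```

For a file with 130M links the neighbour sets would not fit comfortably in memory on a modest machine, so a real version would probably need to stream the file and count degrees first.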

When comparing these maps with their French counterpart, there are several things that are quite remarkable:

- Most importantly, there is no cluster that I would qualify as “common culture” or “shared knowledge”. There is most certainly a large, dense zone at the center but while the French one draws in all kinds of topics, this version has worldwide country information only. I would prudently argue that the English version of Wikipedia shows a more globalized picture of the world, even if there is a large zone of pages on the left that deals with the United States. It’s a bigger and more heterogeneous world that emerges, but there still is a dominant player.

- Sports is even bigger in the English version and typically American sports (Baseball, NASCAR, etc.) show up on the left in smaller, denser clusters compared to the gigantic football (soccer) area that stretches from the center to the bottom right.

- The Sciences are smaller but entertainment (TV, popular music, comic books, video games, etc.) is much more present. At least at this level of observation.

- There are some seriously “strange” clusters, such as the dense yellow zone on the far right halfway between top and center that shows a group of Russian painters I have never heard of. Not that I’m an expert but I’ve found little trace of any other painters. This shows the weakness of my selection method by link degree – if there were a way to select nodes by page-views, the results would probably be very different, at least for our Russian painters. But it also shows that despite having become a rather respectable encyclopedia with a quite classic subject outlook, Wikipedia still is a space for off-the-track topics and for communities that are so passionate about a certain subject that they will groom it and grow it.

I plan on releasing the scripts used to build these maps in the future but I want to try out a couple more things before that, most particularly a version that only takes into account in-links, which should reduce the presence of certain “distributor” pages (“events in 2010”, “people alive”, etc.).
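To illustrate why counting only in-links would push “distributor” pages down, here is a small sketch that separates in-degree from out-degree. The function name and the example links are hypothetical and only meant to show the principle.

```python
from collections import Counter

def degree_counts(links):
    """Return (in_degree, out_degree) counters for a list of (source, target) links."""
    in_degree, out_degree = Counter(), Counter()
    for source, target in set(links):  # count each distinct link once
        out_degree[source] += 1
        in_degree[target] += 1
    return in_degree, out_degree

# Invented example: a list-like page that links out a lot but is rarely linked to.
links = [
    ("Events_in_2010", "Page_A"), ("Events_in_2010", "Page_B"),
    ("Events_in_2010", "Page_C"), ("Page_A", "Page_B"),
    ("Page_C", "Page_B"),
]
in_degree, out_degree = degree_counts(links)
```

In this toy case the list page has three out-links and no in-links, so it would rank high in an undirected count but disappear from a selection based on in-links alone.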

Edit: a map of the English Wikipedia is here.

Wikipedia is a fascinating object for way too many reasons. The way it is produced, the place it has taken in society, its size and evolution, and many other aspects are truly remarkable. Studying Wikipedia has become a discipline in itself and while there may be certain signs of fatigue on the editing front, there is still much to learn and to discover. I have recently started to take an interest in looking at the way knowledge is structured in different contexts and the availability of certain tools and datasets makes Wikipedia a perfect object for scrutiny. If only it weren’t that big. Still, it’s the 21st century and computers are getting really fast, so why not try mapping Wikipedia. All of it.

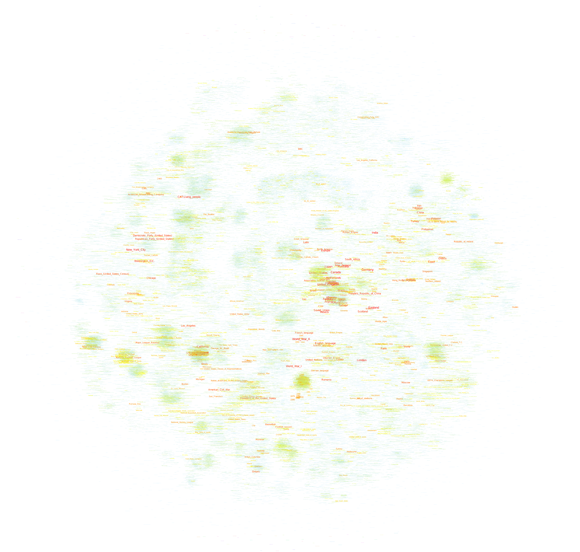

There are different ways to start such a project, but simply taking the link structure is probably the most obvious. This allows for bypassing the internal taxonomy and may lead to a more “organic” expression of underlying knowledge structures. Unfortunately, computers are not that fast – at least not mine – and so I had to make two concessions: I took a non-English variant (I settled for French) and reduced the number of nodes to a (barely) manageable amount. The final graph file (.gdf – do not even think about working with it with less than 4GB of RAM) was built by taking pages that had at least 100 connections with other pages. From an initial 183K pages and 11.5M links I went down to a more manageable 40K and 2M respectively. To make things workable, I chose to visualize the page names only, no nodes, no edges. The result looks like this (click on the image for a very big .png):

Reliable gephi did not only do the graph layout (OpenOrd plugin, 1000 iterations) but dutifully detected “communities” in the network, which actually did work really well. And here is a version in elegant grayscale, this time without community detection:

The graph shows a big dense zone in the middle that is quite unreadable but composed of world history, politics, geography, and other elements that constitute a core set of knowledge elements that are highly interlinked. While France plays an important role here, these elements are actually very globalized and include countries from all over the world. Could we interpret this as a field of “common” or “shared” knowledge? A set of topics that transcend specialization and form the very core of what our culture considers essential?

Just to the right of the very center, there is a rather visible (in orange) cluster on the United States. Around the center you’ll find major historic events and periods (WWII, middle ages, renaissance, etc.). The arts are on the right (mostly music) and France’s most popular art form – Cinema – starts at the top right, in a highly dense orange cluster and goes to the top left, tellingly fusing with theatre. The Sciences form a rather strange blue band that goes from the center top to the top right.

And then there is sports. I was a bit surprised by how much of it there is and how well the clustering and community detection works for identifying individual fields – football, tennis, car racing, and so on. The second surprise was how few “geek” subjects appear on the map. There is a digital technology cluster on the top right but I haven’t found any traces of the legendary Star Trek cluster. In the end, French Wikipedia appears to be a rather classic encyclopedia if you look at it from a subject angle. Could we use such maps to compare subject prominence between cultures?

Obviously, the method for mapping Wikipedia has to be refined to make maps more readable but the results are actually already quite telling. Let’s see whether the same approach can work for the English version – which is a cool 10 times bigger…

After having sparked a series of revolutions mostly on its own – socioeconomics is a thing of the 20th century anyways – Twitter is looking to finally make some money off that society-changing prowess. One of the steps in that direction is the new regulations for developers, or rather, the new regulations for those who want to develop a Twitter app but are no longer welcome to do so. As this Ars Technica piece describes, apps that provide features similar to those of Twitter’s own applications are no longer allowed; existing programs will be allowed to linger on, but new ones will be blocked. Ars cites a mail by developer Steve Streza on the twitter-dev mailing-list, here in full:

Twitter continues to make hostile and aggressive moves to alienate the third-party developers who helped make it the platform it is now. Today it’s third party Twitter clients. Tomorrow it’ll be URL shorteners and image/video hosts. Next it’ll be analytics and ads and who knows what else. Maybe you guys should spend some time improving the core of the service (uptime, reliability, bug fixes, etc.) rather than ingressing on the work of the thousands of developers who made Twitter an exciting place to be.

The story itself is not new. APIs are a great way for a company to experiment with new features and ideas without having to take any major risks themselves. Google led the way with Google Maps, slowly adding features to its service that had been pioneered by third party developers and deemed viable by users. Legally, there is not much to do about these practices (if they want to, companies can simply close down their web services, too) and it’s quite understandable that Twitter wants to control a value chain that promises to be quite profitable in the end. But for users and developers the reliance on private companies and closed systems is a big risk indeed. I’ve been working on a research project using Twitter data for over a year and while everything seems to be OK for the moment, what if our team suddenly gets locked out? Hundreds of hours down the drain?

When using proprietary services, you should be prepared for such things to happen but when I look at the role Twitter played in recent events in North Africa and the Middle East – it was a major conduit after all – and I think about that one company’s (well, there’s Facebook, too) ability to simply close the pipes, I can’t help but feel worried. While the Internet was presented as a herald of decentralization, its global span has actually allowed for a concentration and system lock-in that is quite unique in the history of communication.

I think I’m just going to stick to email after all…

While there are probably a lot of people that have stumbled over the Google Ngram Viewer, it is safe to assume that fewer have read the paper (Science, January 2011) by Michel et al. that documents the project and gives a good idea of the kind of “big iron” science we can expect to capture quite a lot of attention over the next couple of years. According to the authors (14 of them, one being “The Google Books Team”, another Steven Pinker), the project – fittingly termed culturomics – is based on a sample of 5,195,769 books, which apparently represents roughly 4% of all the books ever published. The easiest way to show the scope of what the researchers aim to do is quoting the abstract in full:

We constructed a corpus of digitized texts containing about 4% of all books ever printed. Analysis of this corpus enables us to investigate cultural trends quantitatively. We survey the vast terrain of ‘culturomics,’ focusing on linguistic and cultural phenomena that were reflected in the English language between 1800 and 2000. We show how this approach can provide insights about fields as diverse as lexicography, the evolution of grammar, collective memory, the adoption of technology, the pursuit of fame, censorship, and historical epidemiology. Culturomics extends the boundaries of rigorous quantitative inquiry to a wide array of new phenomena spanning the social sciences and the humanities.

Next to the sheer size of the corpus, there are several things that are quite remarkable with this project:

1) While the paper is full of graphs, it is immensely interesting that many of the measurements taken can be “reenacted” with the Ngram Viewer. In a passage that diagnoses “a greater focus on the present” in more recent publications, the authors show that the half-life (i.e. the number of years it takes for a date to get to half the frequency value of an initial peak) of dates gets much shorter over time. We can easily graph the result ourselves:

This possibility to query the data ourselves (as well as the comprehensive data sharing) represents quite a change in how we can relate to the results as scholars and while only the most well-funded projects will be able to provide a “companion” data-tool, there is a real epistemological shift underway. From a teaching perspective, the hands-on approach may actually be even more valuable.

2) We increasingly have very comprehensive data sets available that can be used as concept markers in very different contexts. In this case, the authors used 740,000 names of persons from Wikipedia to study different aspects of fame. But one could easily imagine using GeoNames to perform a similar survey of the ebb and flow of geographic prominence. I am quite sure that linguists will soon bring together the Ngram data with WordNet to study concept evolution and other things.

3) While the examples developed in the article are fascinating – and there will certainly be many more – the epistemological horizon is quite vague for the moment. There is no question that historical linguistics will have a field day plunging into the data, but the intellectual rationale behind the project of culturomics is still a bit thin:

Culturomics is the application of high-throughput data collection and analysis to the study of human culture. Books are a beginning, but we must also incorporate newspapers, manuscripts, maps, artwork, and a myriad of other human creations. Of course, many voices—already lost to time— lie forever beyond our reach.

Culturomic results are a new type of evidence in the humanities. As with fossils of ancient creatures, the challenge of culturomics lies in the interpretation of this evidence.

I would argue that it is not so much the interpretation of evidence that represents a challenge but the integration of these new computer-based approaches into meaningful research agendas that ask non-trivial questions. While it may be interesting to be able to attach a number to the competence of Nazi censorship efforts, this competence was never very much in doubt and while numbers and graphs may confer an aura of scientific respectability, the findings will most probably not add anything to our understanding of national socialism.

While it is increasingly unpopular to cite Snow’s Two Cultures, this early proposal for a quantitative approach to culture (in its historic dimension) will give rise to all kinds of polemics, misunderstandings, and demarcation efforts. The public availability of a query tool is, however, a real reason for hope: humanities scholars will be able to try it out for themselves and with a bit of luck, we will have a broader view on its usefulness for cultural analysis in a couple of months.

The Digital Methods Initiative at the University of Amsterdam – incidentally my new employer – has an ever growing list of very useful tools that help with studying online phenomena. The Wikipedia Network Analysis tool (like most DMI software written by Erik Borra) is particularly interesting if we simply take into account the place of Wikipedia in our contemporary knowledge configurations. The tool crawls Wikipedia from a starting URL (by default at a +2 radius) and – amongst other things – spits out a source node / target node list of links between the different pages.

To visualize the data, you can use Many Eyes but there are significant limits to working with online tools. This little script will take the source/target data and create a gdf file you can explore with gephi or guess. This is a Wikipedia network surrounding the page on data visualization:

What is rather incredible is that I actually filtered the nodes with only one connection from the graph, going from 4995 to 690. Wikipedia has become big. Very, very big.
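For readers who want to try something similar, the two steps mentioned above (dropping nodes with only one connection and writing a source/target list as a .gdf file) could look roughly like the sketch below. This is not the script linked above: the function names and sample pages are invented, the pruning is a single pass, and commas in labels are simply replaced rather than quoted.

```python
import io
from collections import Counter

def drop_single_link_nodes(links):
    """Remove links that touch a node with only one connection (single pass)."""
    degree = Counter()
    for source, target in links:
        degree[source] += 1
        degree[target] += 1
    return [(s, t) for s, t in links if degree[s] > 1 and degree[t] > 1]

def write_gdf(links, out):
    """Write (source, target) pairs as GDF: a node section followed by an edge section."""
    nodes = sorted({page for link in links for page in link})
    ids = {page: f"n{i}" for i, page in enumerate(nodes)}
    out.write("nodedef>name VARCHAR,label VARCHAR\n")
    for page in nodes:
        # Simplification (assumption): strip commas instead of quoting the label.
        out.write(f"{ids[page]},{page.replace(',', ' ')}\n")
    out.write("edgedef>node1 VARCHAR,node2 VARCHAR\n")
    for source, target in links:
        out.write(f"{ids[source]},{ids[target]}\n")

# Invented sample of a crawled source/target list.
links = [
    ("Data_visualization", "Edward_Tufte"),
    ("Data_visualization", "Charles_Minard"),
    ("Edward_Tufte", "Charles_Minard"),
    ("Data_visualization", "Some_stub"),
]
pruned = drop_single_link_nodes(links)
buffer = io.StringIO()
write_gdf(pruned, buffer)
gdf_text = buffer.getvalue()
```

Writing to a file instead of a `StringIO` buffer only requires passing an open file handle, e.g. `with open("network.gdf", "w", encoding="utf-8") as f: write_gdf(pruned, f)`.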

An interesting insight to take from this graph is that many of the data visualization pioneers are placed at the center of the network, indicating that the field has grown and diversified from a limited set of initial concepts and experiments – something that can be easily confirmed by looking at the literature of the field where the same examples pop up regularly.

A visualization approach may be interesting for studying Wikipedia as a knowledge platform instead of a social experiment. While the attention given to forms of governance, contribution, etc. is certainly justified, we may want to take a closer look at the actual organization of knowledge on Wikipedia and how this compares to other forms of collecting knowledge.

The Official Google Blog has recently written about changes to the ranking procedure that were introduced after a NYT article wrote about an online retailer that had apparently found out that being nasty to your customers would help getting good search rankings because all of the complaints and bad user reviews would get you links and boost PageRank. While Google denies that this logic would work, they have added a ranking layer to their search results that specifically targets online merchants. The interesting thing about the blog post is that the author details several things that the company could have done but didn’t do while actually revealing very little about what the “algorithmic solution” they implemented actually consists of. From the post:

Instead, in the last few days we developed an algorithmic solution which detects the merchant from the Times article along with hundreds of other merchants that, in our opinion, provide an extremely poor user experience. The algorithm we incorporated into our search rankings represents an initial solution to this issue, and Google users are now getting a better experience as a result.

While I do not believe that transparency is the prime solution to the gatekeeper issues surrounding search, this paragraph really is strikingly vague. Has Google compiled a list of merchants that are systematically downranked? How is this list compiled? What does “in our opinion” mean? Is this “opinion” expressed in the form of an algorithmic procedure (one could imagine using the hReview microformat to collect reviews on merchants)?

We’ll probably not get any answers to these questions but the case really shows how murky the whole ranking thing really has become: in an always growing online world, search visibility has extremely important financial ramifications (despite the social media hype) and I believe that companies like Google will increasingly rely on human judgment as a complement to algorithmic procedures (which are just another form of human judgment BTW). This will certainly lead to more legal activity around ranking in the future because courts still understand human meddling a lot better than software design…

I just saw that the good people from sociomatic have prepared a nice little slideshow on how to use gephi to analyze social network data extracted from Facebook (using netvizz). This is a great way to start playing around with network analysis and the slides should really help with the first couple of steps…