Category Archives: statistics

“Of course, in the study of such complicated phenomena as occur in biology and sociology, the mathematical method cannot play the same role as, let us say, in physics. In all cases, but especially where the phenomena are most complicated, we must bear in mind, if we are not to lose our way in meaningless play with formulas, that the application of mathematics is significant only if the concrete phenomena have already been made the subject of a profound theory.”

A. D. Aleksandrov, A General View of Mathematics. In: A. D. Aleksandrov, A. N. Kolmogorov, M. A. Lavrent’ev, Mathematics: Its Content, Methods and Meaning. Moscow 1956 (trans. 1964)

While scholars often underline their commitment to non-deterministic conceptions of “effects”, models of causality in the human and social sciences can still be a bit simplistic sometimes. But a more subtle approach to causality would have to concede that, while most often cumulative and contradictory, lines of causation can sometimes be quite straightforward. Just consider this example from Commensuration as a Social Process, a great text from 1998 by Espeland and Stevens:

Faculty at a well-regarded liberal arts college recently received unexpected, generous raises. Some, concerned over the disparity between their comfortable salaries and those of the college’s arguably underpaid staff, offered to share their raises with staff members. Their offers were rejected by administrators, who explained that their raises were ‘not about them.’ Faculty salaries are one criterion magazines use to rank colleges. (p.313)

This is a rather direct effect of ranking techniques on something very tangible, namely salary. But the relative straightforwardness of the example also highlights a bifurcation of effects: faculty gets paid more, staff less. The specific construction of the ranking mechanism in question therefore produces social segmentation. Or does it simply reinforce the existing segmentation between faculty and staff that led college evaluators to construct the indicators the way they did in the first place? Well, there goes the simplicity…

When Lawrence Lessig famously stated that “code is law”, the simplest and most striking example was AOL’s decision to – arbitrarily – limit the number of people that could log into a chat room at the same time to 23. While the social consequences of this rule were quite far-reaching, they could be traced to a simple line of text somewhere in a script stating that “limit = 23;” (apparently someone changed that to “limit = 36;” a bit later).

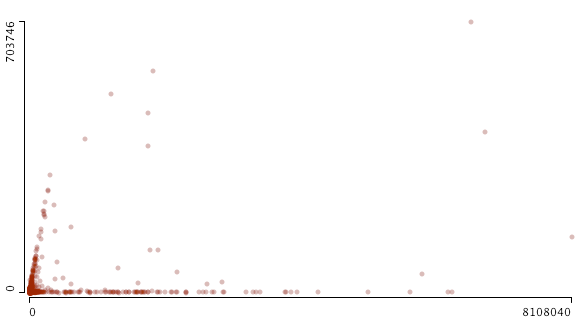

When starting to work on a data exploration project linking Web sites to Twitter, I wasn’t aware that the microblogging site had similar limitations built in. Sometime in 2008, Twitter apparently capped the number of people one can follow at 2000. I stumbled over this limit by accident when graphing friends and followers for the 24K+ accounts we are following for our project:

This scatterplot (made with Mondrian, x: followers / y: friends) shows the cutoff quite well but it also indicates that things are a bit more complicated than “limit = 2000;”. From looking at the data, it seems that a) beyond 2000, the friend limit is directly related to the number of followers an account has and b) some accounts are exempt from the limit. Just like everywhere else, there are exceptions to the rule and “all are equal before the law” (UN Declaration of Human Rights) is a standard that does not apply in the context of a private service.
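To make the contrast with a bare “limit = 2000;” concrete, here is a minimal sketch of what such a rule might look like in code. It only encodes the two observations above (a follower-dependent allowance beyond 2000, and exemptions). The function names, the `ratio` value, and the `exempt` flag are assumptions made up for illustration; they are not Twitter's actual rule, which the data alone cannot reveal.

```python
def max_friends(followers, base_limit=2000, ratio=1.1, exempt=False):
    """Return the largest number of accounts this account may follow.

    base_limit is the cap mentioned in the post; ratio is a made-up
    coupling factor, NOT a documented Twitter value.
    """
    if exempt:
        # Some accounts appear to escape the rule entirely.
        return float("inf")
    # Everyone may follow up to base_limit accounts; beyond that,
    # the allowance grows with the number of followers.
    return max(base_limit, int(followers * ratio))


def may_follow_one_more(friends, followers, **rule):
    """True if an account with these counts is still below its allowance."""
    return friends < max_friends(followers, **rule)


print(may_follow_one_more(2000, 500))               # stuck at the cap
print(may_follow_one_more(2000, 5000))              # allowance grew with followers
print(may_follow_one_more(2000, 500, exempt=True))  # exempt account
```

Even this toy version already has three parameters instead of one, which is roughly the point: the “law” is a small program rather than a single constant.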

While programmed rules and limits play an important role in structuring possibilities for communication and exchange, a second graph indicates that social dynamics leave their traces as well:

This is the same data but zoomed out to include the accounts with the highest friend and follower count. There is a distinct bifurcation in the data, two trends emerging at the same time: a) accounts that follow the friend/follower limit coupling and b) accounts that are followed by a lot of others while not following many people themselves. The latter category is obviously celebrity accounts such as David Lynch, Paul Krugman, or Karl Lagerfeld. These brands are simply using Twitter as a one-to-many medium. But what about the first category? A quick examination confirms that these are Internet professionals, mostly from marketing and journalism. These accounts are not built on a transfer of social capital (celebrity status) from the outside, but on continuous cross-platform networking and diligent posting. They have to play by different rules than celebrities, reciprocating follower connections and interacting with other accounts to abide by the tacit rules of the twitterverse. They have built their accounts into mass media as well but had to work hard to get there.

These two examples show how useful data visualization can be in drawing our attention to trends in the data that may be completely invisible when looking at the tables only.

While there are probably a lot of people that have stumbled over the Google Ngram Viewer, it is safe to assume that fewer have read the paper (Science, January 2011) by Michel et al. that documents the project and gives a good idea of the kind of “big iron” science we can expect to capture quite a lot of attention over the next couple of years. According to the 14 authors (one being “The Google Books Team”, another Steven Pinker), the project – fittingly termed culturomics – is based on a sample of 5,195,769 books, which apparently represents roughly 4% of all the books ever published. The easiest way to show the scope of what the researchers aim to do is to quote the abstract in full:

We constructed a corpus of digitized texts containing about 4% of all books ever printed. Analysis of this corpus enables us to investigate cultural trends quantitatively. We survey the vast terrain of ‘culturomics,’ focusing on linguistic and cultural phenomena that were reflected in the English language between 1800 and 2000. We show how this approach can provide insights about fields as diverse as lexicography, the evolution of grammar, collective memory, the adoption of technology, the pursuit of fame, censorship, and historical epidemiology. Culturomics extends the boundaries of rigorous quantitative inquiry to a wide array of new phenomena spanning the social sciences and the humanities.

Next to the sheer size of the corpus, there are several things that are quite remarkable with this project:

1) While the paper is full of graphs, it is immensely interesting that many of the measurements taken can be “reenacted” with the Ngram Viewer. In a passage that diagnoses “a greater focus on the present” in more recent publications, the authors show that the half-life (i.e. the number of years it takes for a date to get to half the frequency value of an initial peak) of dates gets much shorter over time. We can easily graph the result ourselves:

This possibility to query the data ourselves (as well as the comprehensive data sharing) represents quite a change in how we can relate to the results as scholars and while only the most well-funded projects will be able to provide a “companion” data-tool, there is a real epistemological shift underway. From a teaching perspective, the hands-on approach may actually be even more valuable.

2) We increasingly have very comprehensive data sets available that can be used as concept markers in very different contexts. In this case, the authors used 740,000 names of persons from Wikipedia to study different aspects of fame. But one could easily imagine using GeoNames to perform a similar survey of the ebb and flow of geographic prominence. I am quite sure that linguists will soon bring together the Ngram data with WordNet to study concept evolution and other things.

3) While the examples developed in the article are fascinating – and there will certainly be many more – the epistemological horizon is quite vague for the moment. There is no question that historical linguistics will have a field day plunging into the data, but the intellectual rationale behind the project of culturomics is still a bit thin:

Culturomics is the application of high-throughput data collection and analysis to the study of human culture. Books are a beginning, but we must also incorporate newspapers, manuscripts, maps, artwork, and a myriad of other human creations. Of course, many voices—already lost to time— lie forever beyond our reach.

Culturomic results are a new type of evidence in the humanities. As with fossils of ancient creatures, the challenge of culturomics lies in the interpretation of this evidence.

I would argue that it is not so much the interpretation of evidence that represents a challenge but the integration of these new computer-based approaches into meaningful research agendas that ask non-trivial questions. While it may be interesting to be able to attach a number to the competence of Nazi censorship efforts, this competence was never very much in doubt and while numbers and graphs may confer an aura of scientific respectability, the findings will most probably not add anything to our understanding of national socialism.

While it is increasingly unpopular to cite Snow’s Two Cultures, this early proposal for a quantitative approach to culture (in its historic dimension) will give rise to all kinds of polemics, misunderstandings, and demarcation efforts. The public availability of a query tool is, however, a real reason for hope: humanities scholars will be able to try it out for themselves and with a bit of luck, we will have a broader view on its usefulness for cultural analysis in a couple of months.
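For readers who want to go one step beyond graphing, the half-life measure described in point 1) can be computed from any yearly frequency series. What follows is a minimal sketch that follows the definition given there (years until the frequency falls to half of its initial peak); it is not the authors' code, the function name is my own, and the two series are invented toy numbers rather than values taken from the Ngram data.

```python
def half_life(series):
    """series: dict mapping year -> relative frequency of a date such as "1880".

    Returns the number of years between the peak and the first later year
    in which the frequency has dropped to half the peak value or below,
    or None if that never happens within the series.
    """
    peak_year = max(series, key=series.get)
    half = series[peak_year] / 2
    for year in sorted(y for y in series if y > peak_year):
        if series[year] <= half:
            return year - peak_year
    return None


# Toy numbers, invented for illustration only.
early_date = {1880: 10.0, 1885: 8.0, 1890: 7.0, 1900: 6.0, 1912: 5.0, 1920: 4.0}
late_date = {1973: 10.0, 1975: 8.0, 1980: 6.0, 1983: 5.0, 1990: 3.0}

print(half_life(early_date))  # 32
print(half_life(late_date))   # 10
```

With real data one would probably want to smooth the series first, since a single noisy year can otherwise trigger the threshold too early.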

What is a link? From a methodology standpoint, there is no answer to that question but only the recognition that when using graph theory and associated software tools, we project certain aspects of a dataset as nodes and others as links. In my last post, I “projected” authors from the air-l list as nodes and mail-reply relationships as links. In the example below, I still use authors as nodes but links are derived from a similarity measure of a statistical analysis of each poster’s mails. Here are two Gephi graphs:

If you are interested in the technique, it’s a simple similarity measure based on the vector-space model and my amateur computer scientist’s PHP implementation can be found here. The fact that the two posters who changed their “from:” text have both of their accounts close together (can you find them?) is a good indication that the algorithm is not completely botched. The words floating on the links in the right-hand graph are the ones that contribute the highest value to the similarity calculation, i.e. words that are used relatively often by both of the linked authors while being generally rare in the whole corpus. Elis Godard and Dana Boyd for example have both written on air-l about Ron Vietti, a pastor who (rightfully?) thinks the Internet is the devil and because very few other people mentioned the holy warrior, the word “vietti” is the highest value “binder” between the two.
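For those who prefer reading code to reading descriptions, here is a minimal Python sketch of this kind of measure. It is not the PHP implementation mentioned above: it assumes plain TF-IDF weighting and cosine similarity, which is one common way to build a vector-space comparison, and the poster names and the four-message corpus are invented toy data.

```python
import math
from collections import Counter


def tfidf_vectors(docs):
    """docs: dict author -> list of tokens. Returns author -> {term: weight}."""
    n = len(docs)
    df = Counter()  # number of authors using each term
    for tokens in docs.values():
        df.update(set(tokens))
    vectors = {}
    for author, tokens in docs.items():
        tf = Counter(tokens)
        # Frequent for this author, rare in the corpus -> high weight.
        vec = {t: (c / len(tokens)) * math.log(n / df[t]) for t, c in tf.items()}
        norm = math.sqrt(sum(w * w for w in vec.values()))
        vectors[author] = {t: w / norm for t, w in vec.items()} if norm else {}
    return vectors


def similarity(a, b):
    """Cosine similarity of two unit vectors plus the term contributing most."""
    shared = {t: a[t] * b[t] for t in a.keys() & b.keys() if a[t] * b[t] > 0}
    if not shared:
        return 0.0, None
    binder = max(shared, key=shared.get)
    return sum(shared.values()), binder


# Invented toy corpus, one concatenated "mail archive" per poster.
docs = {
    "alice": "the internet is the devil says pastor vietti".split(),
    "bob": "pastor vietti on the internet again".split(),
    "carol": "the internet and the pastor study".split(),
    "dave": "a study of the internet".split(),
}
vecs = tfidf_vectors(docs)
score, binder = similarity(vecs["alice"], vecs["bob"])
print(round(score, 3), binder)  # binder is "vietti"
```

Words that everybody uses (“the”, “internet”) get a weight of zero here, which is why “vietti”, shared by only two posters, ends up as the binder rather than the more frequent terms.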

What is important in networks that are the result of heavily iterative processing is that the algorithms used to create them are full of parameters and changing one of these parameters just a little bit may (!) have larger repercussions. In the example above I actually calculate a similarity measure between each pair of nodes (roughly 60^2 / 2 results) but in order to make the graph somewhat readable I inserted a threshold that boils it down to 637 links. The missing measures are not taken into account in the physics simulation that produces the layout – although they may (!) be significant. I changed the parameter a couple of times to get the graph “right”, i.e. to find a good compromise between link density for simulation and readability. But look at what happens when I raise the threshold so that only the 100 strongest similarity measures survive:

First, a couple of nodes disconnect, two binary stars form around the “from:” changers and the large component becomes a lot looser. Second, Jeremy Hunsinger loses the highest PageRank to Chris Heidelberg. Hunsinger had more links when lower similarity scores were taken into account, but when things get rough in the network world, bonding is better than bridging. What is result and what is artifact?
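The effect is easy to reproduce on a toy example. The sketch below is not the pipeline behind the graphs above (those were laid out and ranked in Gephi); it assumes an unweighted PageRank computed by simple power iteration, and the seven nodes and their similarity scores are invented so that one node has many weak links (bridging) and another has a few strong ones (bonding).

```python
def strongest_links(similarities, k):
    """Keep only the k highest-scoring (a, b, score) triples."""
    return sorted(similarities, key=lambda link: link[2], reverse=True)[:k]


def pagerank(nodes, links, damping=0.85, iterations=100):
    """Unweighted PageRank on an undirected graph via power iteration."""
    neighbours = {n: set() for n in nodes}
    for a, b, _ in links:
        neighbours[a].add(b)
        neighbours[b].add(a)
    rank = {n: 1 / len(nodes) for n in nodes}
    for _ in range(iterations):
        # Rank held by isolated nodes is spread evenly over all nodes.
        dangling = sum(rank[n] for n in nodes if not neighbours[n])
        new = {n: (1 - damping + damping * dangling) / len(nodes) for n in nodes}
        for n in nodes:
            for m in neighbours[n]:
                new[m] += damping * rank[n] / len(neighbours[n])
        rank = new
    return rank


def top(rank):
    """Node with the highest PageRank."""
    return max(rank, key=rank.get)


# Invented toy data: "hub" has many weak links, "a" has a few strong ones.
nodes = ["hub", "a", "b", "c", "d", "e", "f"]
links = [
    ("a", "b", 0.90), ("a", "c", 0.80), ("a", "d", 0.70),
    ("hub", "a", 0.40), ("hub", "b", 0.35), ("hub", "c", 0.30),
    ("hub", "d", 0.25), ("hub", "e", 0.20), ("hub", "f", 0.15),
]

print(top(pagerank(nodes, links)))                      # hub
print(top(pagerank(nodes, strongest_links(links, 3))))  # a
```

Nothing about the underlying similarity scores changes between the two calls; only the cut-off does, and with it the answer to “who is the most central poster?”.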

Most advanced algorithmic techniques are riddled with such parameters and getting a “good” result not only implies fiddling around a lot (how do I clean the text corpus, what algorithms to look for what kind of structures or dynamics, what parameters, what type of representation, here again, what parameters, and so on…) but also having implicit ideas about what kind of result would be “plausible”. The back and forth with the “algorithmic microscope” is always floating against a backdrop of “domain knowledge” and this is one of the reasons why the idea of a science based purely on data analysis is positively absurd. I believe that the key challenge is to stay clear of methodological monoculture and to articulate different approaches together whenever possible.

Gabriel Tarde is a wellspring of interesting – and sometimes positively weird – ideas. In his 1899 article L’opinion et la conversation (reprinted in his 1901 book L’opinion et la foule), the French judge/sociologist makes the following comment:

Il n’y [dans un Etat féodal, BR] avait pas “l’opinion”, mais des milliers d’opinions séparées, sans nul lien continuel entre elles. Ce lien, le livre d’abord, le journal ensuite et avec bien plus d’efficacité, l’ont seuls fourni. La presse périodique a permis de former un agrégat secondaire et très supérieur dont les unités s’associent étroitement sans s’être jamais vues ni connues. De là, des différences importantes, et, entre autre, celles-ci : dans les groupes primaires [des groupes locales basés sur la conversation, BR], les voix ponderantur plutôt que numerantur, tandis que, dans le groupe secondaire et beaucoup plus vaste, où l’on se tient sans se voir, à l’aveugle, les voix ne peuvent être que comptées et non pesées. La presse, à son insu, a donc travaillé à créer la puissance du nombre et à amoindrir celle du caractère, sinon de l’intelligence.

After a quick survey, I haven’t found an English translation anywhere – there might be one in here – so here’s my own (taking some liberties to make it easier to read):

[In a feudal state, BR] there was no “opinion” but thousands of separate opinions, without any steady connection between them. This connection was only delivered by first the book, then, and with greater efficiency, the newspaper. The periodical press allowed for the formation of a secondary and higher-order aggregate whose units associate closely without ever having seen or known each other. Several important differences follow from this, amongst others, this one: in primary groups [local groups based on conversation, BR], voices ponderantur rather than numerantur, while in the secondary and much larger group, where people connect without seeing each other – blind – voices can only be counted and cannot be weighed. The press has thus unknowingly labored towards giving rise to the power of the number and reducing the power of character, if not of intelligence.

Two things are interesting here: first, Lazarsfeld, Berelson, and Gaudet’s classic study from 1944, The People’s Choice, and even more so Lazarsfeld’s canonical Personal Influence (with Elihu Katz, 1955) are seen as a rehabilitation of the significance (for the formation of opinion) of interpersonal communication at a time when media were considered all-powerful brainwashing machines by theorists such as Adorno and Horkheimer (Adorno actually worked with/for Lazarsfeld in the 1930s, where Lazarsfeld tried to force poor Adorno into “measuring culture”, which may have soured the latter to any empirical inquiry, but that’s a story for another time). Tarde’s work on conversation (the first order medium) is theoretically quite sophisticated – floating against the backdrop of Tarde’s theory of imitation as the basic mechanism of cultural production – and actually succeeds in thinking together everyday conversation and mass-media without creating any kind of onerous dichotomy. L’opinion et la conversation would merit an inclusion into any history of communication science and it should come as no surprise that Elihu Katz actually published a paper on Tarde in 1999.

Second, the difference between ponderantur (weighing) and numerantur (counting) is at the same time rather self-evident – an object’s weight and its number are logically quite different things – and somewhat puzzling: it reminds us that while measurement does indeed create a universe of number where every variable can be compared to any other, the aspects of reality we choose to measure remain connected to a conceptual backdrop that is by itself neither numerical nor mathematical. What Tarde calls “character” is a person’s capacity to influence, to entice imitation, not the size of her social network.

I’m currently working on a software tool that helps studying Twitter and while sifting through the literature I came across this citation from a 2010 paper by Cha et al.:

We describe how we collected the Twitter data and present the characteristics of the top users based on three influence measures: indegree, retweets, and mentions.

Besides the immense problem of defining influence in non-trivial terms, I wonder whether many of the studies on (social) networks that pop up all over the place are hoping to weigh but end up counting again. What would it mean, then, to weigh a person’s influence? What kind of concepts would we have to develop and what could be indicators? In our project we use the bit.ly API to look at clickstream referrers – if several people post the same link, who succeeds in getting the most people to click it – but this may be yet another count that says little or nothing about how a link will be used/read/received by a person. Perhaps this is as far as the “hard” data can take us. But is that really a problem? The one thing I love about Tarde is how he can jump from a quantitative worldview to beautiful theoretical speculation and back with a smile on his face…

My colleague Theo Röhle and I went to the Computational Turn conference this week. While I would have preferred to hear a bit more on truly digital research methodology (in the fully scientific sense of the word “method”), the day was really quite interesting and the weather unexpectedly gorgeous. Most of the papers are available on the conference site; make sure to have a look. The text I wrote with Theo tried to structure some of the epistemological challenges and problems to take into account when working with digital methods. Here’s a tidbit:

…digital technology is set to change the way scholars work with their material, how they “see” it and interact with it. The question is, now, how well the humanities are prepared for these transformations. If there truly is a paradigm shift on the horizon, we will have to dig deeper into the methodological assumptions that are folded into the new tools. We will need to uncover the concepts and models that have carried over from different disciplines into the programs we employ today…

Over the last couple of years, the social sciences have been increasingly interested in using computer-based tools to analyze the complexity of the social ant farm that is the Web. Issuecrawler was one of the first such tools and today researchers are indeed using very sophisticated pieces of software to “see” the Web. Sciences-Po, one of these rather strange French institutions that were founded to educate the elite but which now have to increasingly justify their existence by producing research, has recently hired Bruno Latour to head their new médialab, which will most probably move in that very direction. Given Latour’s background (and the fact that Paul Girard, a very competent former colleague at my lab, heads the R&D department), this should be really very interesting. I do hope that there will be occasion to tackle the most compelling methodological question when it comes to the application of computers (or mathematics in general) to analyzing human life, which is beautifully framed in a rather reluctant statement from 1889 by Karl Pearson, a major figure in the history of statistics:

“Personally I ought to say that there is, in my own opinion, considerable danger in applying the methods of exact science to problems in descriptive science, whether they be problems of heredity or of political economy; the grace and logical accuracy of the mathematical processes are apt to so fascinate the descriptive scientist that he seeks for sociological hypotheses which fit his mathematical reasoning and this without first ascertaining whether the basis of his hypotheses is as broad as that human life to which the theory is to be applied.” Cited in Stigler, Stephen M.: The History of Statistics. Harvard University Press, 1990, p. 304

Continuing in the direction of exploring statistics as an instrument of power more characteristic of contemporary society than means of surveillance centered on individuals, I found a quite beautiful citation by French sociologist Gabriel Tarde in his Les Lois de l’imitation (1890/2001, p.192f):

Si la statistique continue à faire des progrès qu’elle a faits depuis plusieurs années, si les informations qu’elle nous fournit vont se perfectionnant, s’accélérant, se régularisant, se multipliant toujours, il pourra venir un moment où, de chaque fait social en train de s’accomplir, il s’échappera pour ainsi dire automatiquement un chiffre, lequel ira immédiatement prendre son rang sur les registres de la statistique continuellement communiquée au public et répandue en dessins par la presse quotidienne.

And here’s my translation (that’s service, folks):

If statistics continues to make the progress it has made for several years now, if the information it provides us with continues to become more perfect, faster, more regular, steadily multiplying, there might come the moment where from every social fact taking place springs – so to speak – automatically a number that would immediately take its place in the registers of the statistics continuously communicated to the public and distributed in graphic form by the daily press.

When Tarde wrote this in 1890, he saw the progress of statistics as a boon that would allow a more rational governance and give society the means to discuss itself in a more informed, empirical fashion. Nowadays, online, a number springs from every social fact indeed but the resulting statistics are rarely a public good that enters public debate. User data on social networks will probably prove to be the very foundation of any business that is to be made with these platforms and will therefore stay jealously guarded. The digital town squares are quite private after all…